AI Slop Is a Governance Problem. Here Are 4 Principles to Fix It.

AI has made code cheaper than ever. What it didn’t make cheaper is the cost of being wrong.

There’s a word for what happens when that cost goes unpaid: slop. Code that compiles, passes CI, looks reasonable and readable, and yet is brittle under real conditions. Developers already use the term. It’s earned, not imposed. And it describes a growing share of what AI-assisted teams are shipping to production.

In enterprise environments, Developer Experience with AI is partly determined by whether your tech stack, workflows, and team processes make quality enforceable, actionable, and explainable, and make it safe to move fast.

I care about this because I’m a software developer, and I’ve lived the part of “shipping fast” that can be uncomfortable: incidents, rollbacks, postmortems, and defending architectural decisions.

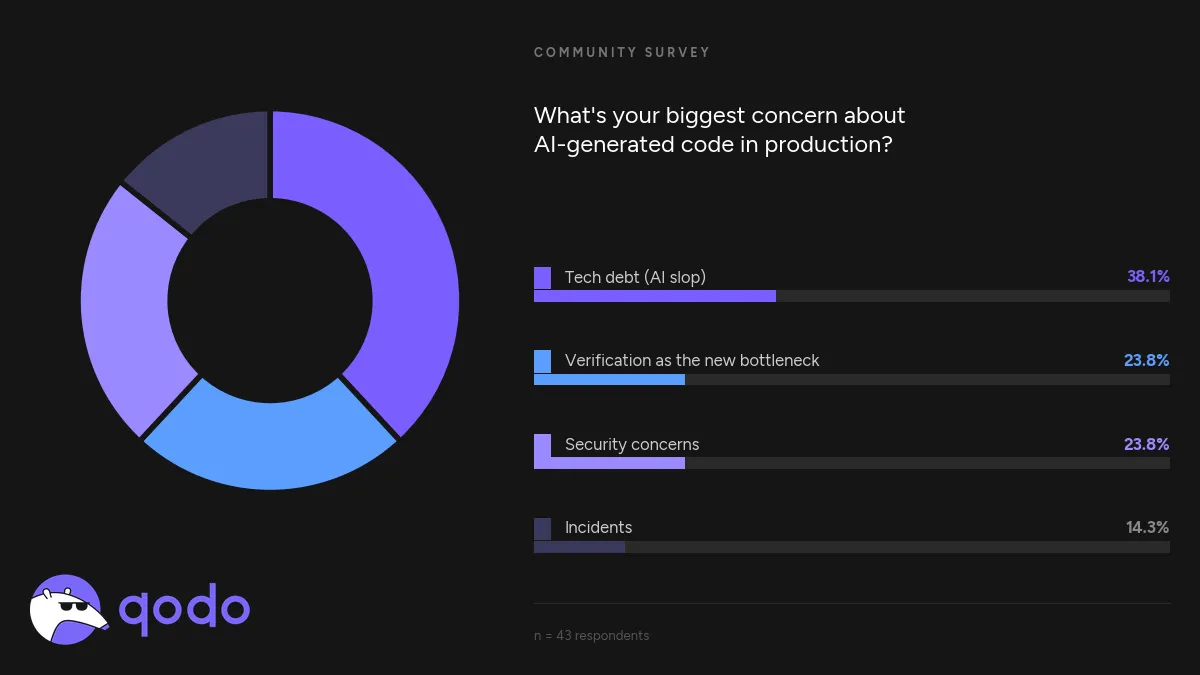

In a recent community poll, we asked developers what concerns them most about AI-generated code in production. The top answer, at 38.1%, was tech debt, which is what the community is calling “AI slop”. Verification bottlenecks and security tied for second at 23.8%. Incidents came last. The thing developers fear most is the slow accumulation of code that nobody fully understands!

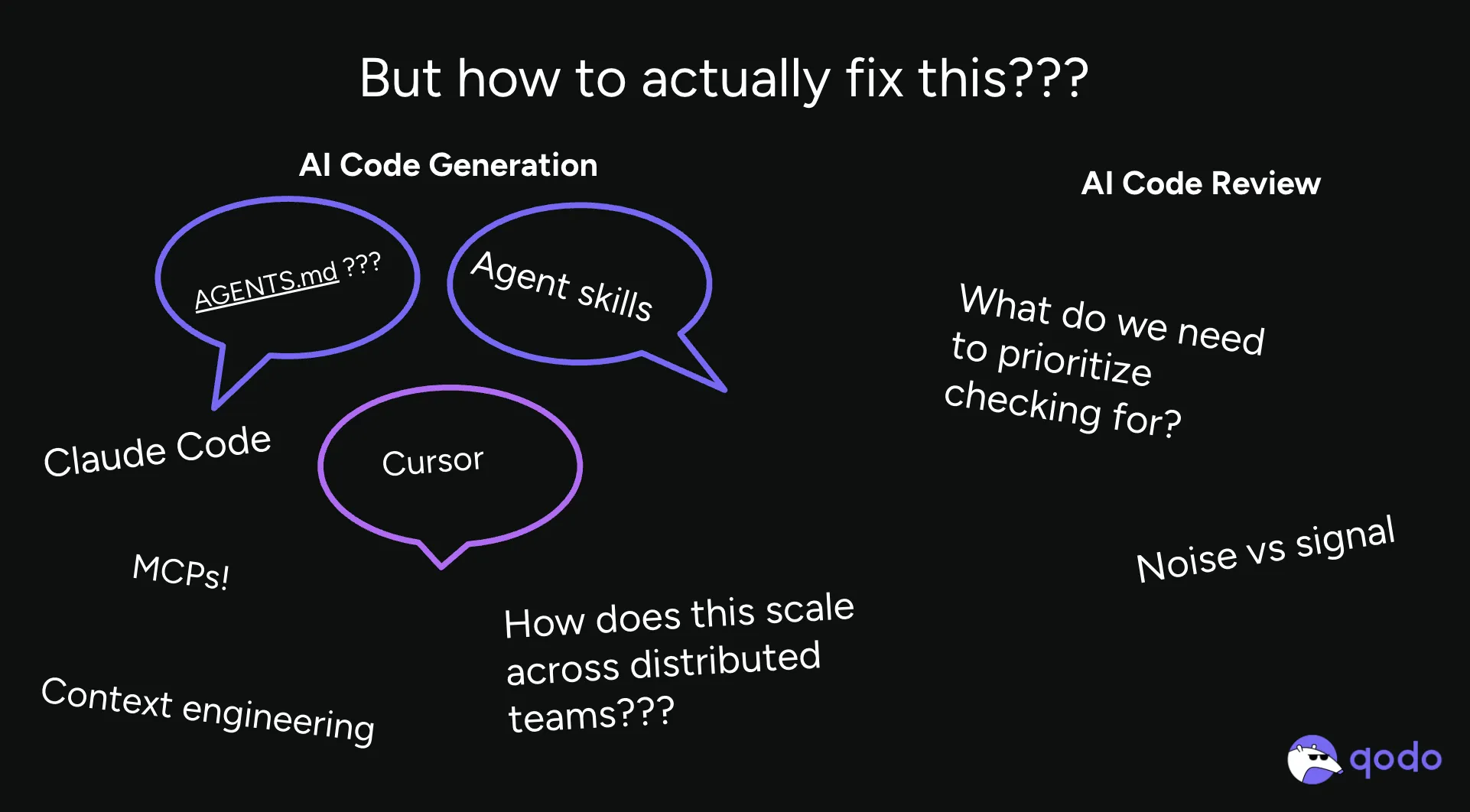

The AI coding agent obsession: speed you can’t stand behind

AI developer tools optimize for one thing: movement. Generate more. Merge faster. Close the ticket. Move on to the next thing.

And trust me, this has been an incredibly exciting time to build. I spent a weekend building an agentic CLI tool to help me write behavior-driven development tests and boost test coverage to 95-100% across repos.

But I don’t measure engineering solely by movement. I measure it by whether systems hold up when they’re stressed: when traffic spikes or pods hang, when a new team inherits your service, or when multiple teams rely on services you build. And, of course, when something breaks that’s difficult to triage.

So far, AI has raised code volume, abstraction layers, and distance from execution. That means the cost of every lapse in rigor went up, and this is where slop comes from. Current LLM limitations mean there are few mechanisms to verify the output at the depth required.

I consider that a trap (call it the AI velocity paradox): “fast” starts to feel like “safe” because the code compiles and CI is green. Teams can easily end up merging changes they don’t truly understand end-to-end. That is slop at the system level, and I consider it a workflow and tooling gap.

Governance is the trust layer

When I say “AI code governance,” I’m talking about the engineering system and processes that make developers feel like “this is safe to ship.” The “coloring within the lines”. The guardrails that are systematic, intentional, and sometimes automated.

And when issues slip through these guardrails, there are other systems designed to mitigate these issues effectively (remediation techniques).

Here’s how I define it:

- Code governance is the system of standards, controls, and ownership that makes quality enforceable at scale.

- Controls are repeatable mechanisms that prevent or surface risk (gates, policies, required checks).

- Quality signals are measurable indicators of maintainability, security posture, or change safety.

- Auditability is the ability to explain what changed, why, and what risk was assessed.
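To make those definitions concrete, here is a minimal sketch of how controls and auditability could fit together in code. It is an illustration under stated assumptions, not how Qodo or any particular platform implements them: the `Change` fields, the three example controls, and the `AuditRecord` shape are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Change:
    """A proposed change. Every field here is a hypothetical stand-in."""
    summary: str          # what changed
    rationale: str        # why it changed
    tests_passed: bool
    human_approvals: int


# A control is a repeatable check: it returns a risk message, or None if it passes.
Control = Callable[[Change], Optional[str]]


def require_passing_tests(change: Change) -> Optional[str]:
    return None if change.tests_passed else "tests are failing"


def require_human_approval(change: Change) -> Optional[str]:
    return None if change.human_approvals >= 1 else "no human approval"


def require_rationale(change: Change) -> Optional[str]:
    return None if change.rationale.strip() else "no rationale recorded"


@dataclass(frozen=True)
class AuditRecord:
    """Auditability: what changed, why, and what risk was assessed."""
    what: str
    why: str
    risks_found: Tuple[str, ...]
    shippable: bool


def evaluate(change: Change, controls: List[Control]) -> AuditRecord:
    # Run every control so the record shows all surfaced risks, not just the first.
    risks = tuple(msg for msg in (control(change) for control in controls) if msg)
    return AuditRecord(change.summary, change.rationale, risks, shippable=not risks)


CONTROLS: List[Control] = [require_passing_tests, require_human_approval, require_rationale]

# A change with green tests but no approval and no stated reason is not shippable.
record = evaluate(Change("Add retry to payment client", "", True, 0), CONTROLS)
print(record.shippable, record.risks_found)
```

The design choice worth noting is that the gate and the audit trail come from the same evaluation: the record that blocks the merge is also the record that later explains it.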

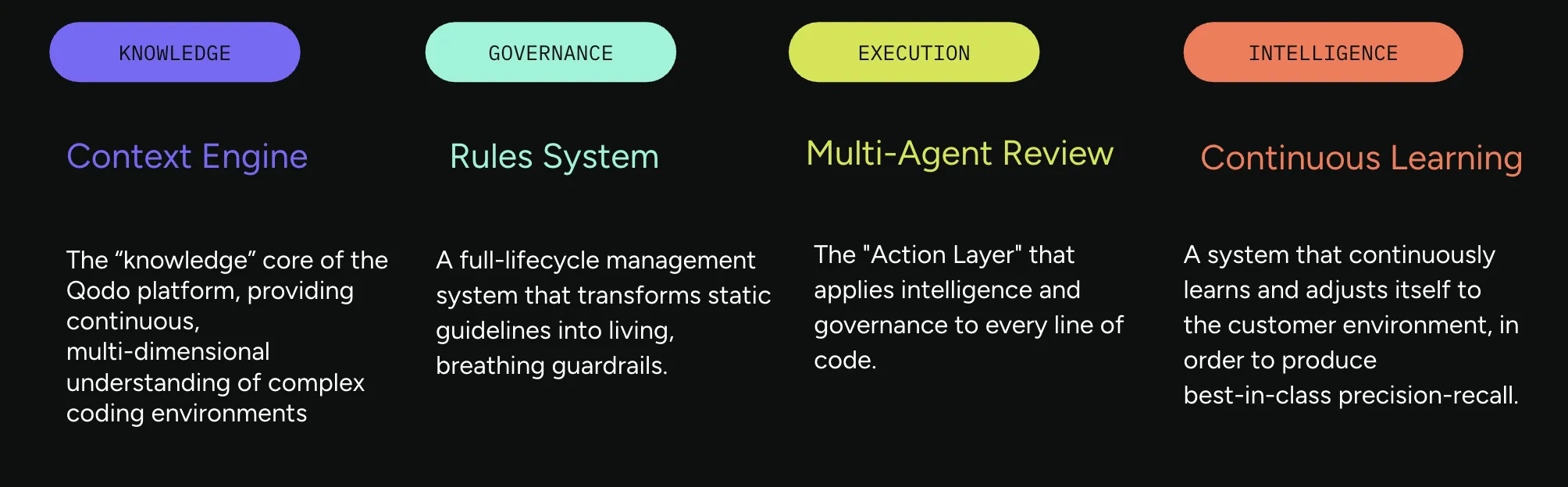

This is the design philosophy behind how we built Qodo. The platform is structured around these three layers: controls through a rules system that codifies how your team writes code, quality signals through a multi-agent review suite that evaluates changes against full codebase context, and auditability through PR memory that preserves what was flagged, what was resolved, and why.

If you can’t explain why code shipped, you introduce liability. And software already has plenty of variables that make it inherently a liability.

AI compounds that equation.

I’m not saying that to sound intense. It’s just that I’m watching teams pay for “unexplained shipping” later, plus tax and interest.

Trustworthiness is designed, not hoped for

A lot of teams try to “trust AI” the way they try to “have a strong engineering culture”: by hoping the right people will do the right thing at the right time.

That doesn’t scale. Not with the volume of changes AI enables.

I’ve come to believe that trustworthiness in AI must be built into the workflow so that the safe path is the default. In the same way, the trustworthiness of code should be demonstrated through verification layers.

What does that mean in practice? It means your developer experience is built around a few non-negotiables. These are the four principles we apply at Qodo and that I use as a framework for thinking about code governance in AI-accelerated environments.

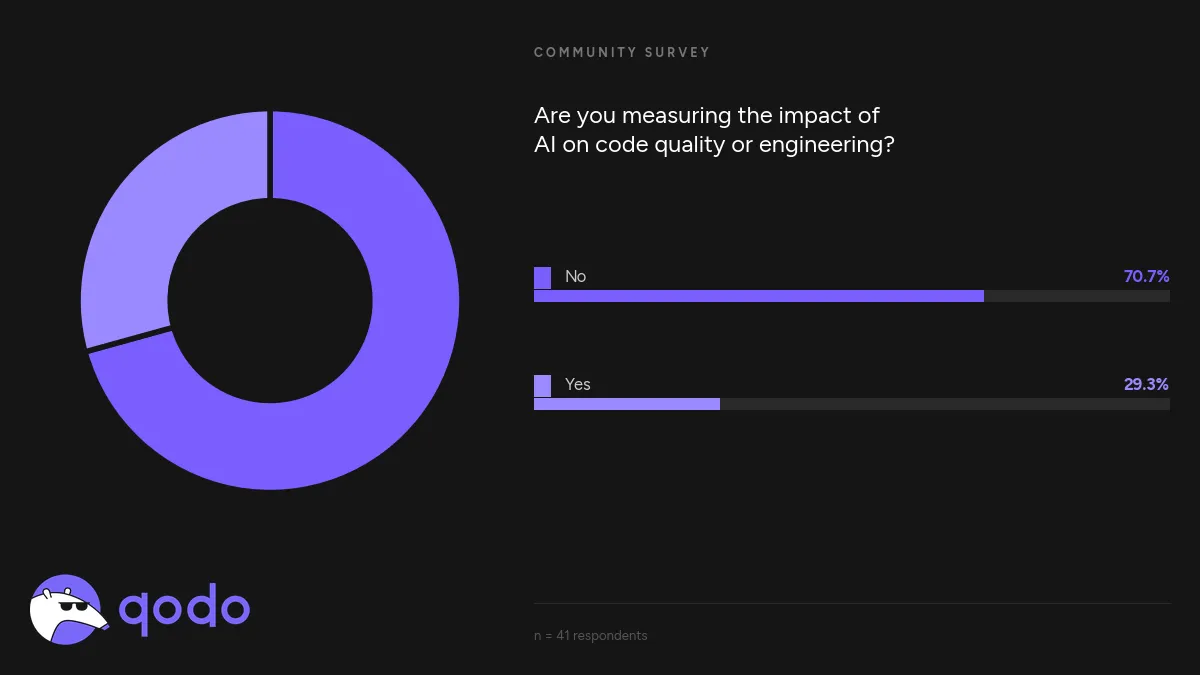

70.7% of the developers we surveyed are not measuring the impact of AI on code quality. That’s the gap between hoping for trustworthiness and designing it. You can’t govern what you don’t measure.

1. Treat comprehension as a requirement

One of my most conservative rules is simple: if code cannot be understood, it cannot be trusted.

Before I ask “Is it fast?” or “Is it clever?” I ask: can a competent engineer understand this under pressure? Is the intent legible without external explanation?

Governance makes comprehension visible and enforceable through standards, review expectations, and signals that reward clarity over cleverness.

This is why Qodo’s rules system starts with discovery. Before you enforce a standard, you have to surface and articulate it. The Discover → Measure → Evolve lifecycle makes comprehension the first requirement: what are our standards, are they being followed, and where do they need to change? If a rule can’t be explained, it shouldn’t be enforced. If code can’t be understood against a standard, it isn’t ready to ship.

2. Treat code review as a responsibility boundary

I don’t treat code review as a courtesy or a checkbox. I treat it as a boundary: the moment responsibility re-enters the system.

When humans stop writing every line of code, it becomes easier for accountability to quietly disappear. Good governance prevents that. It makes review unavoidable at the right moments, it surfaces risk clearly, and it discourages rubber-stamping.

This is also where trust gets built: by forcing the reasoning to show up before the merge does.

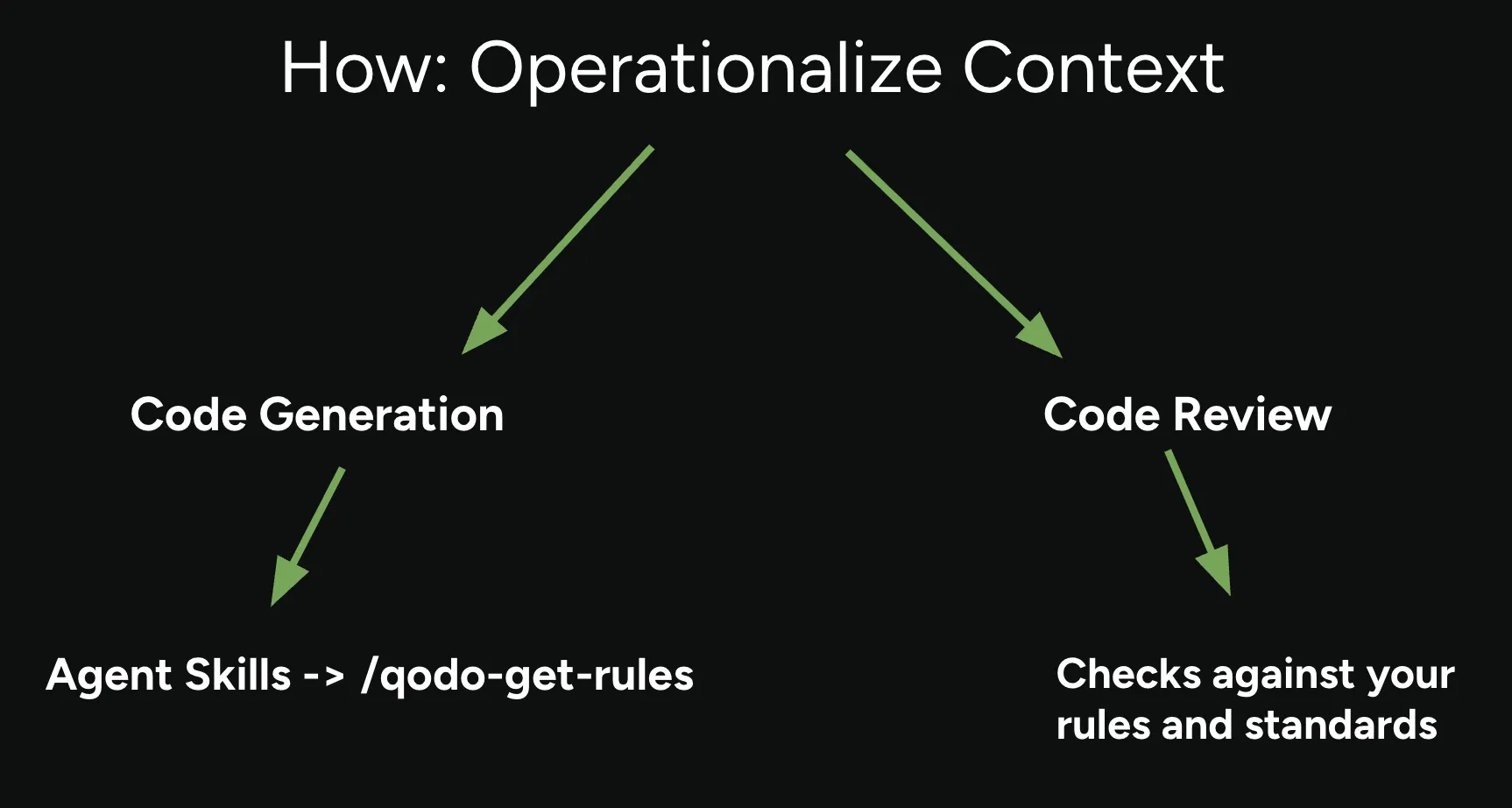

This is the architectural principle behind separating code generation from code review. The same system that writes the code shouldn’t grade its own homework.

The result is a closed loop: rules inform code generation via agent skills like qodo-get-rules, then code review checks against those same rules and standards. Rules → Review → PR History → Rules.

Qodo’s review agent suite operates as an independent verification layer: multiple specialized agents (Critical Issues, Duplicated Logic, Breaking Changes, Ticket Compliance, Rules Enforcement), each focused on a different failure mode, working against the full codebase context rather than just the diff.

The review becomes a genuine boundary of responsibility because it’s informed by something the generator never had: your codebase history, your prior PR decisions, and your team’s standards, all managed in a context engine built for scale and distributed teams.

3. Risk must be visible early

Hidden risk is more dangerous than known risk.

AI makes it easier to ship changes that work today but are fragile tomorrow because risk hides inside abstraction, coupling, and unreadable intent. Governance is how you stop deferring that discovery until the worst possible time.

Fragmentation shows up as scattered standards, inconsistent rules files, and AI tools that each apply different assumptions. The root cause is a context engineering problem, and it compounds at scale. Qodo’s Context Engine addresses this by indexing across repositories, pulling from codebases, agent skills, PR history, rules, and business requirements to give review agents the full picture rather than just a fragment.

In practice, this is what “quality signals” are for: indicators that tell you whether a change is likely to be safe to ship and safe to modify later.
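As a rough illustration of what a quality signal can look like, the sketch below turns a few measurable properties of a change into explicit risk flags. The signal names and every threshold (400 lines, 15 files, 80% diff coverage) are assumptions chosen for the example, not recommended values or anything a specific tool prescribes.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ChangeSignals:
    """Hypothetical measurements taken from a single change."""
    lines_changed: int
    files_touched: int
    diff_coverage: float       # fraction of changed lines exercised by tests, 0.0 to 1.0
    touches_public_api: bool


def risk_flags(
    s: ChangeSignals,
    max_lines: int = 400,            # assumed threshold, tune per team
    max_files: int = 15,             # assumed threshold, tune per team
    min_diff_coverage: float = 0.8,  # assumed threshold, tune per team
) -> List[str]:
    """Return human-readable flags so risk is visible before the merge."""
    flags = []
    if s.lines_changed > max_lines:
        flags.append("large diff: hard to review end-to-end")
    if s.files_touched > max_files:
        flags.append("wide change: many files touched")
    if s.diff_coverage < min_diff_coverage:
        flags.append("changed lines are under-tested")
    if s.touches_public_api:
        flags.append("public API touched: check for breaking changes")
    return flags


for flag in risk_flags(ChangeSignals(1200, 3, 0.5, False)):
    print(flag)
```

The flags are sentences rather than a single score on purpose: a reviewer can act on "changed lines are under-tested" in a way they cannot act on a bare number.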

4. Automation must preserve discernment

I’m not anti-automation. I’m anti-checking out.

The question is not whether automation is possible. The question is whether it preserves or erodes trust. Tools should amplify human discernment, not replace it.

I want systems where it’s normal—and supported—to challenge what the tool suggested, to ask “what assumption is this making?”, and to slow down intentionally when the blast radius is high.
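One way to picture "slowing down intentionally" is a routing rule that asks for more human attention as the blast radius grows. This is a sketch under assumptions: the two inputs, the cutoff of five dependent services, and the three review paths are hypothetical and would differ per organization.

```python
from enum import Enum


class ReviewPath(Enum):
    STANDARD = "one human reviewer"
    ELEVATED = "owner of the affected service must review"
    HIGH_BLAST_RADIUS = "two reviewers plus a staged rollout"


def review_path(dependent_services: int, changes_schema_or_auth: bool) -> ReviewPath:
    """Pick how much human scrutiny a change gets; no path skips the human."""
    # Schema and auth changes are treated as high risk regardless of fan-out (assumption).
    if changes_schema_or_auth or dependent_services >= 5:
        return ReviewPath.HIGH_BLAST_RADIUS
    if dependent_services >= 1:
        return ReviewPath.ELEVATED
    return ReviewPath.STANDARD


print(review_path(dependent_services=7, changes_schema_or_auth=False).value)
```

Automation here decides how much review a change needs, while the judgment itself stays with people on every path.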

Why engineering leaders should care (and why I do)

Poor code quality doesn’t stay in engineering. It shows up as slower teams, higher incident costs, brittle systems, and lost trust—internally and externally.

Enterprises don’t just ship code. They have to defend it: to security, to customers, to future teams, and yes, sometimes to auditors. When your engineering org is AI-accelerated, that need intensifies.

This is why I keep coming back to that litmus test:

If you can’t explain why code shipped, you don’t have velocity. You have liability.

The AI developer experience depends on being able to move quickly without being afraid of what you just merged.

If your org doubles the amount of AI-assisted code it ships this year, will that experience leave you confident in your work, or will it produce a larger pile of production changes that nobody can fully explain for months to come?