Your AI Dev Workflow Is Broken If the Wrong Package Can Still Ship

Code integrity extends through packaging and release, not just code generation.

The easy takeaway from a release incident is “automate more.”

The better takeaway is harder: govern the publish path so the wrong artifact cannot ship.

A recent packaging incident in the AI coding market brought this problem back into view. The tempting response is to treat it as a one-off deploy mistake, or as a reminder to remove one manual step. That lesson is too small.

The issue is that the release path was weak enough that the wrong artifact could move through it at all.

That distinction is critical. Many AI coding conversations end too early. We talk about planning, generation, review, and verification during implementation. Then we treat packaging and release as a separate operational detail.

They are not. If code integrity matters, it has to matter all the way to the shipped artifact.

Why “automate more” is too vague

Automation is not the same thing as governance.

A weak workflow does not become safe just because a machine runs it faster. In aviation, autopilot is not a substitute for flight discipline. It works because the system around it is instrumented, constrained, and monitored.

Software release pipelines are no different.

If the packaging path is opaque, the publish surface is too broad, or no one verifies the final artifact, then more automation can just mean faster drift.
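To make "constrained publish surface" concrete, here is a minimal sketch, assuming a package whose build output lands in a `dist/` directory. The allowlist patterns and function names are hypothetical, chosen only for illustration. The point is that the pipeline fails closed when a file falls outside the boundary instead of quietly shipping it.

```python
from fnmatch import fnmatch

# Hypothetical allowlist: the only paths this package is permitted to publish.
ALLOWED_PATTERNS = ["package.json", "README.md", "LICENSE", "dist/*.js", "dist/*.d.ts"]


def outside_publish_surface(paths, allowed=ALLOWED_PATTERNS):
    """Return every path that matches none of the allowed patterns, sorted."""
    return sorted(p for p in paths if not any(fnmatch(p, pat) for pat in allowed))


def enforce_publish_surface(paths, allowed=ALLOWED_PATTERNS):
    """Fail closed: refuse to publish if anything falls outside the boundary."""
    violations = outside_publish_surface(paths, allowed)
    if violations:
        raise PermissionError(f"refusing to publish, outside surface: {violations}")
    return True


if __name__ == "__main__":
    # A stray source map is caught because no pattern admits it.
    print(outside_publish_surface(["package.json", "dist/cli.js", "dist/cli.js.map"]))
```

One design note: `fnmatch` lets `*` match across `/`, so `dist/*.js` also admits nested files under `dist/`. If your layout needs that distinction, a stricter matcher is worth the extra code.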

So when I hear “this step should have been automated,” my next question is:

Automated inside what boundaries?

The real gap is not a lack of automation. It is a lack of governed automation.

That framing avoids two lazy conclusions at once. It avoids the idea that manual work is always the enemy. And it avoids the idea that automated pipelines are inherently safe.

Some human checkpoints belong exactly where judgment matters. And a pipeline without boundaries is just an efficient way to ship mistakes.
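One way to place a human checkpoint only where judgment matters is to let routine releases flow automatically and route a release to review when it touches files that change what or how the package ships. This is a sketch under assumptions: the list of trust-sensitive paths and the route names are hypothetical and would differ per repository.

```python
from fnmatch import fnmatch

# Hypothetical paths whose changes alter what or how a package ships.
TRUST_SENSITIVE = [
    "package.json",
    ".npmignore",
    ".github/workflows/*",
    "scripts/release*",
]


def needs_human_review(changed_files, sensitive=TRUST_SENSITIVE):
    """Return the changed files that should send this release to a human."""
    return sorted(f for f in changed_files if any(fnmatch(f, pat) for pat in sensitive))


def release_route(changed_files):
    """Automate the routine path; stop at a checkpoint only for sensitive changes."""
    flagged = needs_human_review(changed_files)
    if flagged:
        return ("human_review", flagged)
    return ("auto_publish", [])


if __name__ == "__main__":
    print(release_route(["src/index.ts", "README.md"]))
    print(release_route(["src/index.ts", ".npmignore"]))
```

The automation stays fast for ordinary code changes, and the review cost is paid only when the release boundary itself moves.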

Strong coding controls can still end in a bad release

A workflow can have strong coding-time controls and still fail at release time.

As this incident shows, any team can publish the wrong package when the release path is treated as an afterthought.

That is why code integrity has to mean more than source code that looked reasonable earlier in the SDLC. It has to account for whether the full path from intent to shipped artifact is governable.

Can the team explain what was supposed to ship, what actually shipped, and what evidence confirmed that the two match?

If not, then the integrity boundary ends too early.
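One way to make those three questions answerable is to record a release plan of expected files with their SHA-256 digests, then compare it against the built archive. The sketch below assumes a gzipped tarball artifact; the plan format, the function names, and the `build_tarball` test helper are all hypothetical, not a standard tool.

```python
import hashlib
import io
import tarfile


def sha256(data):
    """Hex SHA-256 digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


def artifact_digests(tar_bytes):
    """Map each regular file inside a .tar.gz artifact to its SHA-256 digest."""
    digests = {}
    with tarfile.open(fileobj=io.BytesIO(tar_bytes), mode="r:gz") as tar:
        for member in tar.getmembers():
            if member.isfile():
                digests[member.name] = sha256(tar.extractfile(member).read())
    return digests


def compare_to_plan(plan, tar_bytes):
    """Compare a release plan (path -> expected digest) with the built artifact."""
    actual = artifact_digests(tar_bytes)
    return {
        "unexpected": sorted(actual.keys() - plan.keys()),  # shipped but never planned
        "missing": sorted(plan.keys() - actual.keys()),  # planned but not shipped
        "changed": sorted(p for p in plan.keys() & actual.keys() if plan[p] != actual[p]),
        "evidence": actual,  # the digests that were actually checked
    }


def build_tarball(files):
    """Test helper: build an in-memory .tar.gz from {path: bytes}."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w:gz") as tar:
        for name, data in files.items():
            info = tarfile.TarInfo(name)
            info.size = len(data)
            tar.addfile(info, io.BytesIO(data))
    return buf.getvalue()


if __name__ == "__main__":
    plan = {"package.json": sha256(b"{}"), "dist/cli.js": sha256(b"main()")}
    artifact = build_tarball(
        {"package.json": b"{}", "dist/cli.js": b"main()", "dist/cli.js.map": b"{}"}
    )
    report = compare_to_plan(plan, artifact)
    print(report["unexpected"])  # ['dist/cli.js.map']
```

In this sketch a release passes only when all three lists are empty, and the `evidence` digests are what you would attach to the release record.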

This is also why sustainable velocity is a better goal than raw speed. Sustainable velocity means the team can keep shipping without turning every future release into a high-risk event. If the coding workflow is governed, but the release workflow is not, the system still has an integrity gap.

Safe generation does not compensate for unsafe shipping. That gap is where small cracks become structural failures.

What a governed publish pipeline looks like

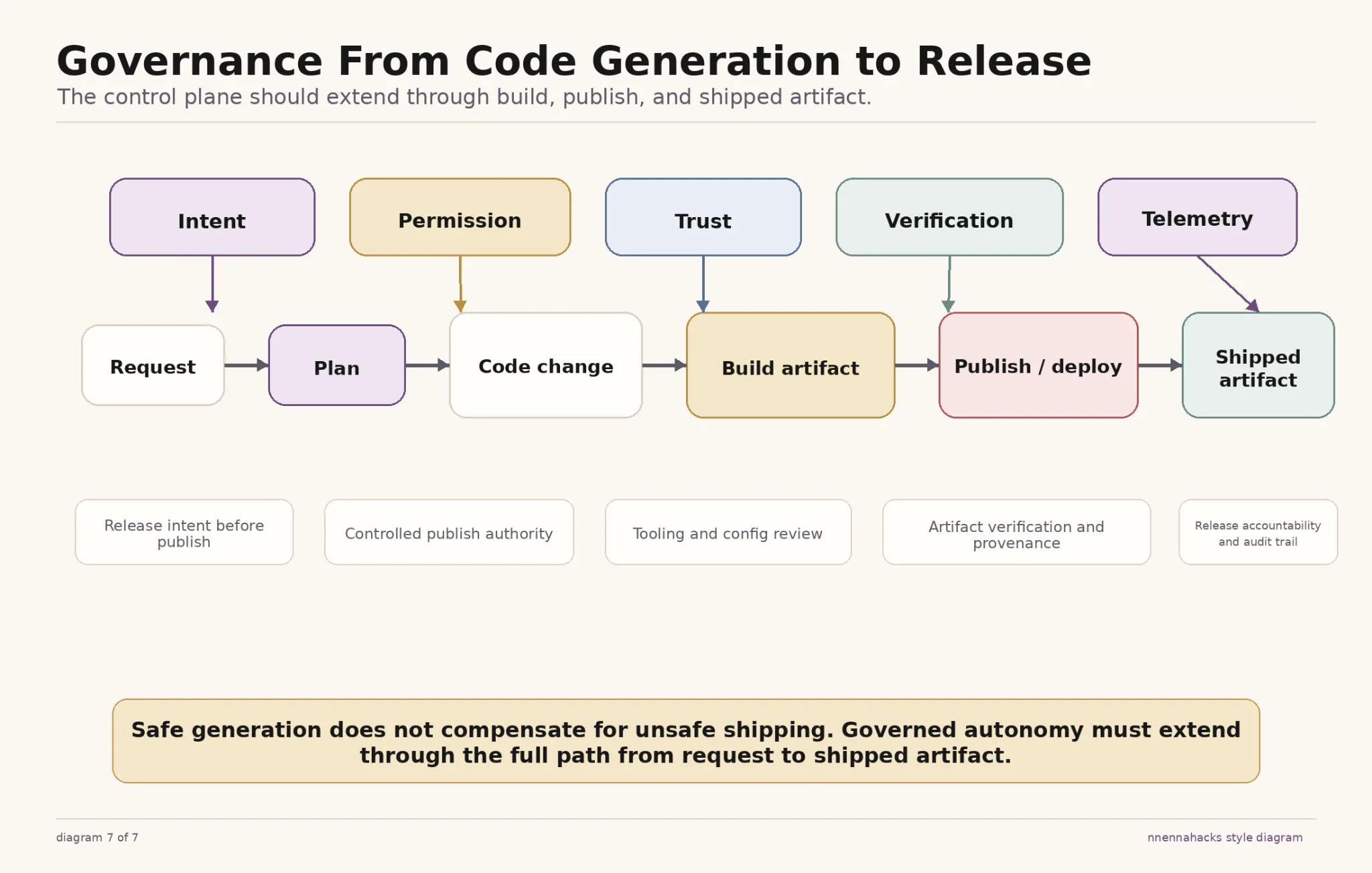

You close this gap by applying the code governance lens to the full SDLC, release included, as shown in the infographic below. Chances are you already do a lot of this in other parts of the SDLC, so you may be off to a good start!

The same control-plane logic that protects coding workflows should also protect package release. Mature systems make risk management legible, as the diagram illustrates.

To implement this, start with the governed publish pipeline in the governed autonomy patterns repo we’ve open-sourced. It applies the same control-plane logic to package releases.
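To show the shape of that control-plane logic, here is a minimal sketch of a publish gate that runs ordered checks, fails closed on the first failure, and writes every decision to an append-only audit log. It is an illustration under assumptions, not the code from that repo: the `PublishGate` class, the release dictionary keys, and the example checks are hypothetical.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Tuple

Check = Tuple[str, Callable[[dict], bool]]


@dataclass
class PublishGate:
    """Runs ordered checks; publishing is allowed only if every check passes."""

    checks: List[Check]
    audit_log: List[str] = field(default_factory=list)

    def _record(self, release_id, step, outcome):
        # Append-only JSON lines give a reviewable trail if something goes wrong.
        self.audit_log.append(json.dumps({
            "time": datetime.now(timezone.utc).isoformat(),
            "release": release_id,
            "step": step,
            "outcome": outcome,
        }))

    def run(self, release):
        for name, check in self.checks:
            passed = bool(check(release))
            self._record(release["id"], name, "pass" if passed else "fail")
            if not passed:
                return False  # fail closed: later steps, including publish, never run
        self._record(release["id"], "publish", "allowed")
        return True


# Hypothetical checks mirroring the questions in this article.
EXAMPLE_CHECKS = [
    ("plan_visible", lambda r: bool(r.get("plan"))),
    ("surface_constrained", lambda r: not r.get("outside_surface")),
    # Verification counts only if someone other than the builder did it.
    ("independently_verified", lambda r: r.get("verified_by") not in (None, r.get("built_by"))),
]

if __name__ == "__main__":
    gate = PublishGate(checks=EXAMPLE_CHECKS)
    release = {"id": "1.2.3", "plan": ["dist/cli.js"], "outside_surface": [],
               "built_by": "ci-build", "verified_by": "ci-verify"}
    print(gate.run(release))
    print(*gate.audit_log, sep="\n")
```

Stopping at the first failed check keeps the log honest: it records exactly how far a release got and why it stopped.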

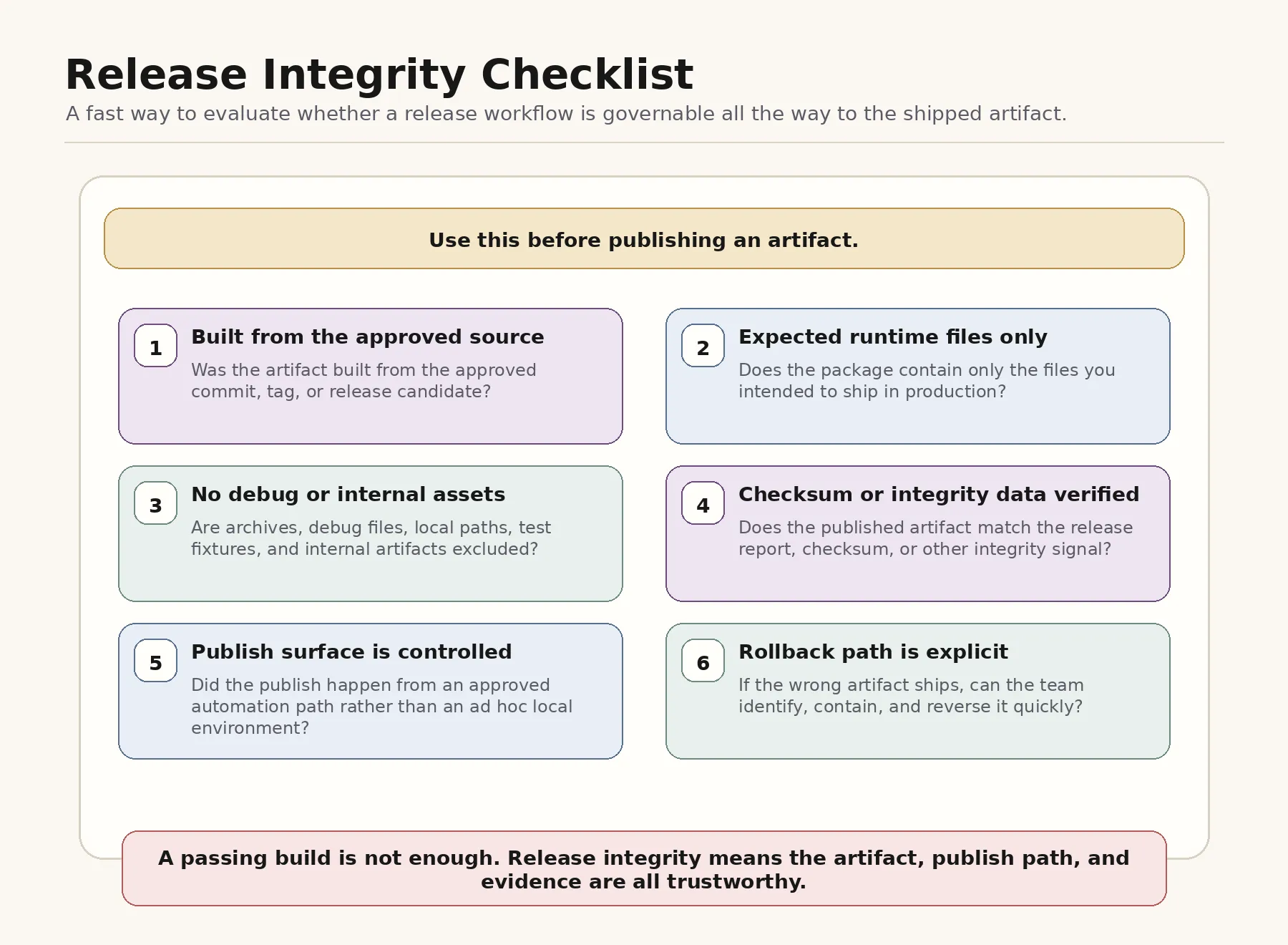

If helpful, you can use this release integrity checklist to verify you’re covering all bases.

A practical starting point

If you already have AI coding tools in production, do one simple exercise.

Read the governed publish pipeline, then open the scorecard and evaluate one release workflow your team already trusts.

Can you see the release plan before publishing? Is the publish surface constrained? Does someone verify the final artifact independently? Do trust-sensitive release changes trigger review? Is there an audit trail if something goes wrong?

If the answers get weaker once the code leaves the editor, that is the integrity gap.
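The exercise above can be sketched as a tiny comparison: answer the same five questions once for coding time and once for release time, then list the controls that disappear between the two. The control labels and function names are hypothetical paraphrases of the questions above, not the scorecard itself.

```python
# Hypothetical labels paraphrasing the five questions above.
CONTROLS = [
    "plan visible before acting",
    "surface constrained",
    "independent verification",
    "sensitive changes trigger review",
    "audit trail",
]


def score(workflow):
    """Count how many of the controls a workflow has (0 to 5)."""
    return sum(1 for c in CONTROLS if workflow.get(c, False))


def integrity_gaps(coding, release):
    """Controls present at coding time but missing at release time."""
    return [c for c in CONTROLS if coding.get(c, False) and not release.get(c, False)]


if __name__ == "__main__":
    coding = {c: True for c in CONTROLS}
    release = {"audit trail": True}
    print(score(coding), score(release))
    print(integrity_gaps(coding, release))
```

Any entry in the returned list is a place where the answers got weaker after the code left the editor.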

Not every team needs a giant release bureaucracy. But boundaries should extend to the part of the workflow where bad artifacts can become real incidents, as in the case of the Claude Code leak.

Code integrity doesn’t have to stop when code is generated. It stops where teams decide it does.

Resources

Read the governed publish pipeline, then use the scorecard and release checklist to evaluate one release workflow your team already trusts. Compare your coding-time controls to your release-time controls, and identify any gaps.