7 Ways to Embed AI Code Review Into Your Dev Workflow

AI is changing what gets caught in code review, when review happens, who conducts it, and what “ready to merge” means on dev teams.

From what I’m seeing in the field and through my own workflows, I’m going to show you 7 ways to integrate AI code review into your development process, from local development through merge. The goal here is confidence in a strong code quality workflow that feels seamless and easy to try out yourself.

1. Use AI review as a pre-flight check before opening a PR

The old flow: write code → push branch → open PR → wait for reviewers.

The better flow: write code → run AI review locally → fix what’s obvious → then open a PR.

Running a local review pass before you open a PR shifts accountability earlier. You catch your own bugs, rule violations, and missing edge cases before another dev ever sees the diff. A colleague then arrives at a cleaner PR and can spend their time on architecture and intent.

With Qodo’s IDE Plugin, this happens in VS Code or JetBrains as you work. You don’t context-switch to a browser or wait for a CI run. The review is right there in the environment where you wrote the code.

How to start: Install the IDE Plugin. Run a review on your branch before opening any PR this week. Track how many issues you address before human review. Adjust your definition of “ready to open PR” accordingly.

2. Triage findings by severity. Don’t treat all comments as equal.

AI code review generates a lot of output. The teams that get value from it aren’t the ones who fix everything. They’re the ones who read findings with a risk lens.

Structure your triage around three tiers:

- Action Required: merge blockers. Fix before this PR ships.

- Review Recommended: high-signal issues worth addressing in this PR if you have capacity.

- Other / Minor: low-priority style or cosmetic notes. Batch these, schedule them, or consciously accept low risk.

Qodo’s agentic code suggestions are already structured this way. Findings include agent labels that tell you what type of issue was detected (bug, rule violation, requirement gap, security, etc.) and severity tags that tell you how urgently it needs attention. You’re reading a prioritized, categorized brief.

How to start: Next time you open a Qodo review, read only the Action Required findings first. Resolve those. Then work through Review Recommended. Build a habit of explicit, tiered triage instead of top-to-bottom scrolling.
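To make the tiered habit concrete, here is a minimal Python sketch of triage. It is not Qodo's data model or API: the `Finding` class, its fields, and the sample findings are hypothetical, and the tier names simply mirror the three tiers above.

```python
from dataclasses import dataclass

# Tier names mirror the three tiers above, in the order you should read them.
TIER_ORDER = ["Action Required", "Review Recommended", "Other / Minor"]


@dataclass
class Finding:
    # Hypothetical shape of a review finding, for illustration only.
    title: str
    label: str     # e.g. "bug", "rule violation", "security"
    severity: str  # expected to be one of TIER_ORDER


def triage(findings):
    """Group findings by tier, keeping their original order inside each tier."""
    tiers = {tier: [] for tier in TIER_ORDER}
    for finding in findings:
        # Unknown severities land in the lowest tier instead of being dropped.
        tiers.get(finding.severity, tiers["Other / Minor"]).append(finding)
    return tiers


findings = [
    Finding("Rename helper for clarity", "style", "Other / Minor"),
    Finding("Unchecked null in payment handler", "bug", "Action Required"),
    Finding("Missing retry on network call", "requirement gap", "Review Recommended"),
]

for tier, items in triage(findings).items():
    print(f"{tier}: {[f.title for f in items]}")
```

The point of the sketch is the reading order: merge blockers come out first no matter where they appeared in the raw review output.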

3. Embed your coding rules into code review

Coding standards and rules are usually written down somewhere, but not always in a place where AI can read them and enforce them across a team.

The typical state: standards live in linters, in PR comments, in internal docs, and buried in many other places. When a developer (or an AI coding assistant) writes code, those standards typically don’t travel with the request.

What I’ve realized is that manually creating and updating rules is tedious. The rule set also never seems to be “complete,” and the rules start to decay the moment the codebase evolves.

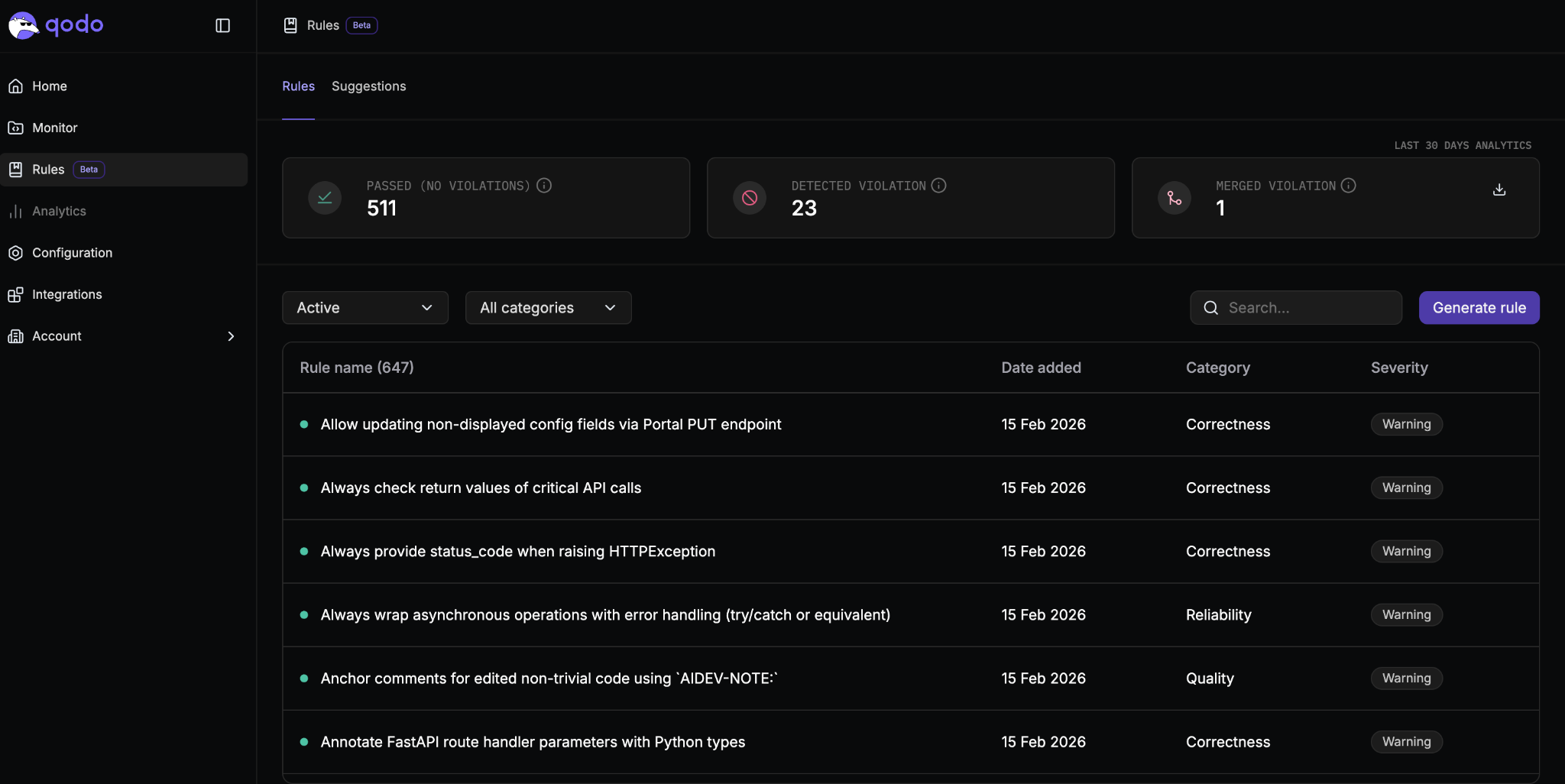

Qodo’s rule system is a great solution to this, as it analyzes your codebase and past PR history to automatically surface patterns that represent how your team writes code. It finds standards already embedded in your commits and review decisions. Then it makes them explicit and enforceable in PRs.

This is the Discover phase of the rule lifecycle (Discover → Measure → Evolve). It answers the question: what are we already implicitly enforcing?

How to start: Review the Rules section in Qodo’s portal and any potential suggestions that pop up in the Suggestions tab. Review the output as a team. Promote the rules that match your real standards into active enforcement. You’ll likely discover you have more implicit standards than you realized… and that several conflict with each other.

4. Use issue fix prompts and skills instead of fixing from scratch

A lot of time is lost after a finding surfaces. The developer understands the issue, but the path from “I see the problem” to “I have a correct fix” still requires a ton of manual effort. That’s especially true for AI-generated code, where the original reasoning isn’t visible in the diff.

Qodo’s agentic code suggestions include structured remediation steps alongside each finding. When a review agent surfaces a bug or rule violation, it also outputs a structured prompt describing what needs to change and why, designed to be acted on directly or handed to an AI coding agent for a candidate fix.

We also have agent skills to auto-fix PR issues right from your terminal in Claude Code and other AI coding agents. I have a full breakdown of my process in a blog post if you want to check that out.

This closes the loop between detection and remediation. The finding and the fix path arrive together.

And what I personally love is seeing the updates directly in the PR from my coding agent using the PR resolver skill. A fix summary and inline comments! It makes things so much easier to keep track of before merge.

How to start: When Qodo surfaces an issue, read the structured remediation guidance before writing your fix. Use it to resolve the issue yourself faster, prompt your coding agent directly, or pull down the PR and fix its issues with the /qodo-pr-resolver skill. Measure how this changes your time-to-fix on flagged issues compared to your previous baseline.
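If you want a simple way to compare time-to-fix against a baseline, a rough sketch could look like the following. The numbers are made-up sample data, the function name is my own, and using the median is just one reasonable choice because a single slow fix won't skew it.

```python
from statistics import median


def time_to_fix_change(baseline_minutes, current_minutes):
    """Return the relative change in median time-to-fix (negative means faster)."""
    base = median(baseline_minutes)
    current = median(current_minutes)
    return (current - base) / base


# Hypothetical sample data: minutes from "finding flagged" to "fix pushed".
baseline = [30, 40, 50]   # before using structured remediation guidance
current = [20, 30, 25]    # after

change = time_to_fix_change(baseline, current)
print(f"Median time-to-fix changed by {change:.1%}")
```

Collect the baseline before you change your process, otherwise there is nothing to compare against.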

5. Redefine what “PR ready” means on your team

“PR ready” has traditionally meant that CI is green on the branch.

Add an explicit AI review phase to your definition of “PR ready”:

- AI review has run with full codebase context

- All Action Required findings have been resolved or explicitly acknowledged

- Rule violations from your team’s active rule set have been addressed

This front-loads work that was previously paid in review comments, back-and-forth, and rework post-merge. Qodo’s Git Plugin runs this pass automatically when a PR opens on GitHub, GitLab, Bitbucket, or Azure DevOps, so the baseline check happens without anyone remembering to trigger it.

How to start: Write out what “PR ready” means on your team today. Add three lines: AI review has run, Action Required findings are resolved, rules are passing. Share it as a team norm. Review it in your next retro.
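The three-line definition can also be written down as an explicit check. This is only a sketch under my own assumptions: `ReviewState` and its fields are hypothetical and do not come from Qodo's Git Plugin, and you would fill them in from whatever your review tooling reports.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewState:
    # Hypothetical snapshot of a PR's review status, for illustration only.
    ai_review_ran: bool
    # Maps each Action Required finding to "open", "resolved", or "acknowledged".
    action_required: dict = field(default_factory=dict)
    open_rule_violations: int = 0


def pr_ready_blockers(state):
    """Return the reasons a PR is not ready; an empty list means it is ready."""
    blockers = []
    if not state.ai_review_ran:
        blockers.append("AI review has not run")
    unresolved = [name for name, status in state.action_required.items()
                  if status == "open"]
    if unresolved:
        blockers.append("Unresolved Action Required findings: " + ", ".join(unresolved))
    if state.open_rule_violations > 0:
        blockers.append(f"{state.open_rule_violations} rule violation(s) still open")
    return blockers


state = ReviewState(
    ai_review_ran=True,
    action_required={"Unchecked null": "resolved", "SQL injection": "open"},
    open_rule_violations=1,
)
for reason in pr_ready_blockers(state):
    print("Not ready:", reason)
```

Returning the list of blockers, instead of a bare true or false, keeps the team norm explainable: anyone can see exactly why a PR isn't ready yet.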

6. Track whether your rules are truly working

Rules that nobody checks are rules that decay. You write them, they go active, and then nobody knows whether developers are following them, dismissing them, or never seeing them at all.

Qodo’s rule analytics surface adoption rates, violation trends, and enforcement patterns per rule. This tells you which rules are genuinely high signal and which are generating noise that developers dismiss. Both answers are valuable.

This is the Measure phase of the lifecycle. The data tells you: is this rule working as policy, or is it being treated as a suggestion?

How to start: After your rules are active for two weeks, review the analytics. Flag any rule with high violation rates and low remediation. That’s a signal the rule is either misconfigured or generating too much friction. Flag any rule that’s never violated. It’s worth asking whether it’s genuinely enforced or just never triggered by PRs.
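Here is a minimal sketch of that review pass in Python. The `RuleStats` fields and both thresholds are my own assumptions, not values from Qodo's analytics, so tune them to what "high" and "low" mean for your team.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RuleStats:
    # Hypothetical per-rule numbers over a review window, for illustration only.
    name: str
    prs_checked: int          # PRs the rule was evaluated against
    prs_with_violation: int   # PRs where the rule fired at least once
    prs_remediated: int       # violating PRs where the issue was fixed

# Assumed thresholds; adjust to your own baseline.
HIGH_VIOLATION_RATE = 0.30
LOW_REMEDIATION_RATE = 0.50


def flag_rule(stats: RuleStats) -> Optional[str]:
    """Return a reason to revisit the rule, or None if nothing stands out."""
    if stats.prs_checked == 0 or stats.prs_with_violation == 0:
        return "never violated: confirm the rule is actually being triggered"
    violation_rate = stats.prs_with_violation / stats.prs_checked
    remediation_rate = stats.prs_remediated / stats.prs_with_violation
    if violation_rate >= HIGH_VIOLATION_RATE and remediation_rate < LOW_REMEDIATION_RATE:
        return "high friction: often violated, rarely fixed"
    return None


rules = [
    RuleStats("no-raw-sql", prs_checked=40, prs_with_violation=20, prs_remediated=4),
    RuleStats("license-header", prs_checked=40, prs_with_violation=0, prs_remediated=0),
    RuleStats("typed-public-api", prs_checked=40, prs_with_violation=5, prs_remediated=5),
]
for rule in rules:
    reason = flag_rule(rule)
    if reason:
        print(f"{rule.name}: {reason}")
```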

7. Evolve your rules based on what you learn

Coding standards as rules can be a “living” policy. Your codebase changes, your team changes, and your standards change over time. Rules that don’t evolve can lead to “standards drift” pretty easily.

Qodo’s Rule System runs continuously to identify conflicts between active rules, flag duplicates, and surface rules that have become outdated based on current codebase patterns. It proposes updates rather than waiting for someone to notice the rule no longer applies.

This is the Evolve phase of the lifecycle and it’s what makes the system self-maintaining.

The goal is a living standards system: one that reflects how your team actually writes code right now, as opposed to how you intended to write it eighteen months ago.

How to start: Check for flagged rule conflicts and duplicates. Promote any new patterns from PR history that show up in the list of suggested rules. Treat rule maintenance as part of your engineering standards.

Putting it together

These seven steps aren’t independent features. They’re a loop:

Run local review before PRs → triage by severity → enforce rules discovered from your own codebase → remediate with structured guidance → redefine “ready” → measure what’s working → evolve based on what you learn → repeat.

This is how you rebuild your team process around a review loop that’s explicit, measurable, and continuously improving.

Start with step one this week. Add one more next sprint. The compounding effect is real and it shows up in merge confidence, review velocity, and the quality of what ends up reaching production.