The Force Won’t Save Your PR: Catch AI Slop Before It Ships

In this hands-on workshop, Nnenna Ndukwe, Developer Relations Lead at Qodo, and Guy Vago, Senior Solution Architect at Qodo, walked through the full AI-assisted development loop: from selecting the right code generation harness to running local AI code review before a PR is ever opened. Real repos, real bugs, and a live poll that revealed 50% of developers are still running on “prompt and pray.”

Topics covered:

Verification is now the slowest part of the SDLC. AI writes code faster than teams can review it. Implementation used to be the constraint. Now it’s verification: determining whether AI-generated code is safe, correct, and aligned with your codebase before it ships.

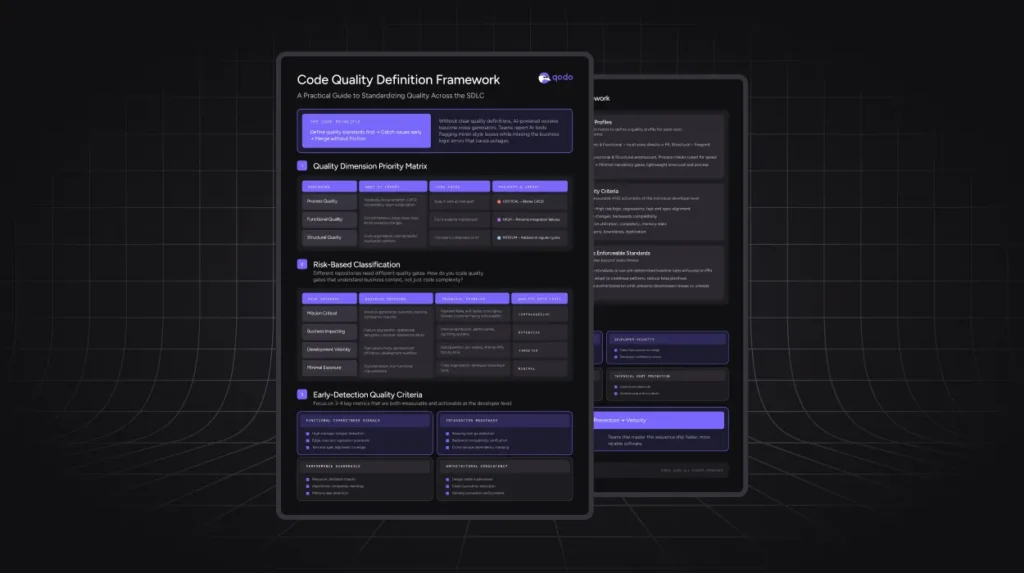

The model isn’t the problem. The harness is. Research shows the same model scores 50+ points lower in a real codebase than on an isolated benchmark. What wraps the model (the spec, the pipeline, the process) determines the quality of what ships. Knowing which harness you’re using also tells you exactly what to look for in review.

Every harness has a slop signature. Spec-first agents implement the spec literally, so they miss whatever an incomplete spec leaves out. SDLC-gated agents make reviewers complacent: as the obvious bugs disappear, subtler issues go undetected. Long-arc autonomous agents lose context across phases, so early decisions can silently break later ones.
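
One way to make these signatures actionable is a simple lookup from harness type to review focus. The sketch below is illustrative only: the harness keys, the `REVIEW_FOCUS` table, and the `review_focus` helper are hypothetical names, and the checklist wording paraphrases the points above rather than describing any Qodo feature.

```python
# Hypothetical lookup: harness type -> what a reviewer should look for.
# Keys and wording are illustrative assumptions, not a Qodo API.
REVIEW_FOCUS = {
    "spec-first": [
        "Compare the spec against real requirements; look for cases it never mentions.",
        "Check behavior the code implements literally but the spec left ambiguous.",
    ],
    "sdlc-gated": [
        "Assume the obvious bugs were already filtered out; look for subtle logic errors.",
        "Do not treat passing gates as proof of correctness.",
    ],
    "long-arc": [
        "Trace early design decisions through later phases for silent breakage.",
        "Re-check assumptions that were made before the agent's context rolled over.",
    ],
}


def review_focus(harness: str) -> list[str]:
    """Return review prompts for a harness type, or a generic fallback."""
    key = harness.strip().lower()
    fallback = ["Harness unknown: review the full diff without assumptions."]
    return REVIEW_FOCUS.get(key, fallback)


if __name__ == "__main__":
    for item in review_focus("Spec-First"):
        print("-", item)
```

A table like this is only a starting checklist; the point is that the reviewer's attention shifts depending on how the code was produced.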

Catch issues before the PR, not during it. Running a local review in the IDE on uncommitted changes surfaces critical bugs before anyone else sees them. The live demo showed how Qodo’s IDE Plugin identifies severity-labeled issues and connects directly to your coding agent for 1-click remediation, before a pull request is ever opened.
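
For a sense of what "uncommitted changes" covers, the sketch below lists the files git reports as modified, staged, renamed, or untracked by parsing `git status --porcelain` output. It is a minimal illustration under stated assumptions: `parse_porcelain` and `uncommitted_paths` are hypothetical helper names, paths that git quotes because of special characters are not handled, and this is not how Qodo’s plugin gathers changes.

```python
import subprocess


def parse_porcelain(output: str) -> list[str]:
    """Extract changed paths from `git status --porcelain` (v1) output.

    Each line is a two-character status, a space, then the path.
    Renames and copies are written as `old -> new`; the new path is kept.
    """
    paths = []
    for line in output.splitlines():
        if len(line) < 4:
            continue  # skip blank or malformed lines
        status, path = line[:2], line[3:]
        if ("R" in status or "C" in status) and " -> " in path:
            path = path.split(" -> ", 1)[1]
        paths.append(path)
    return paths


def uncommitted_paths(repo_dir: str = ".") -> list[str]:
    """Run git in repo_dir and return paths with uncommitted changes."""
    result = subprocess.run(
        ["git", "status", "--porcelain"],
        cwd=repo_dir,
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_porcelain(result.stdout)
```

Calling `uncommitted_paths()` inside a repository returns the set of files a local, pre-PR review would need to look at.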