How Can AI-Powered Test Coverage Detect PR-Level Gaps Before Merge?

TL;DR

- Code coverage percentages stay the same even when PRs introduce new branches: From a reviewer’s perspective, this creates a false sense of safety because tests still pass while the newly added behavior has never actually run.

- Large PRs and AI-assisted changes modify shared functions rather than adding isolated code: Line and branch-based coverage tools report files as “covered” even though authentication paths, error handling, and environment-dependent logic introduced by the change are never exercised.

- AI-powered test coverage scoped to the pull request makes gaps visible at review time: By tying uncovered paths directly to the diff, reviewers can see which routes, branches, and conditions were introduced and confirm whether any test actually triggers them.

- Qodo catches the coverage gaps before merge: As an AI code review platform, Qodo analyzes the diff to show exactly which new logic paths have never run, allowing reviewers to add targeted tests while context is fresh instead of discovering issues in production.

AI-assisted coding changed pull requests in 2026. What used to touch a few files now spans API contracts, business logic, and edge cases, written in minutes. By one market estimate, the global test coverage analytics AI market grew from $1.34 billion in 2024 to $1.67 billion in 2025 (a 24.6% annual growth rate) and is projected to reach $3.97 billion by 2029. This growth reflects a structural shift: teams are integrating AI into software development faster than they can verify its outputs.

I see this firsthand when I open PRs with healthy coverage percentages and still spend 30 minutes tracing logic to see what’s actually tested. Which conditions were added? Do failure paths run? In large orgs, this compounds: codebases span services, ownership shifts, and reviewers validate code they didn’t write. I’ve traced production incidents to routine refactors, feature flags without tests, and AI code that skipped error handling.

AI-powered test coverage fixes this. Tools like Qodo analyze the code diff, identify new logic paths, and show which branches remain untested before merge. Coverage becomes actionable feedback in the PR, where fixes are still cheap.

Code Coverage vs. Test Coverage: Why the Difference Matters in Reviews

Most teams talk about “test coverage,” but what tools actually report is code coverage. Code coverage measures how much of the application code is executed when tests run, at the level of lines, branches, or functions. If a line executes once, it is marked as covered, regardless of why it executed or what behavior it validated.

Test coverage, on the other hand, is about behavioral validation and code quality. It answers whether tests meaningfully exercise the logic a change introduces: new conditions, error paths, configuration fallbacks, and edge cases. This distinction matters during review because code coverage can remain unchanged even when a pull request introduces new runtime behavior.

That’s where the gap shows up. Existing tests may execute a function, so coverage tools mark the file as covered, while newly added branches inside that function never run. From a code coverage perspective, everything looks fine. From a test coverage perspective, the new behavior is unvalidated.
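To make the gap concrete, here is a minimal Python sketch. The `shipping_fee` function and its `express` branch are hypothetical, and `executed_lines` is an illustrative helper built on the standard `sys.settrace` hook, not a description of how any particular coverage tool works. The two existing checks pass and the function clearly runs, yet the line inside the new branch is reached only once a test passes `express=True`.

```python
import sys


def shipping_fee(total, express=False):
    fee = 5.0
    if express:  # branch added by the hypothetical PR
        fee += 10.0
    if total >= 50:
        fee = 0.0
    return fee


def executed_lines(func, *args, **kwargs):
    """Run func once; return executed line offsets relative to its def line."""
    code = func.__code__
    hit = set()

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # do not trace other functions
        if event == "line":
            hit.add(frame.f_lineno - code.co_firstlineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args, **kwargs)
    finally:
        sys.settrace(previous)
    return hit


# Existing tests: both pass, and the function is clearly "executed".
assert shipping_fee(20) == 5.0
assert shipping_fee(60) == 0.0
hit_by_existing = executed_lines(shipping_fee, 20) | executed_lines(shipping_fee, 60)

# A test that targets the new branch reaches a line nothing else did.
hit_by_new_test = executed_lines(shipping_fee, 20, express=True)
only_reached_by_new_test = hit_by_new_test - hit_by_existing
print(sorted(only_reached_by_new_test))  # prints [3], the offset of `fee += 10.0`
```

The point of the sketch is the set difference at the end: the existing tests execute the function, but only a test written for the new condition ever touches the new line.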

When I talk about coverage gaps in this post, I’m referring to that second problem. Not missing tests in general, but missing execution of new logic introduced by a specific pull request. AI-powered test coverage analysis focuses on that gap by tying coverage to the code delta, making it clear which behavior was added and whether any test actually exercised it.
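Tying coverage to the code delta can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Qodo's implementation: `added_lines` and `uncovered_changes` are hypothetical helpers, the file path `orders/fees.py` is made up, and the `executed` mapping stands in for whatever per-line data a coverage run produces. The idea is to collect the new-side line numbers a unified diff adds, then subtract the lines tests actually executed.

```python
import re

HUNK_HEADER = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@")


def added_lines(diff_text):
    """Map each file in a unified diff to the new-side line numbers it adds.

    Sketch only: assumes content lines never begin with '+++ ' or '--- '.
    """
    added = {}
    path = None
    new_line = 0
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            path = line[4:].removeprefix("b/")
            added.setdefault(path, set())
        elif line.startswith("--- "):
            continue
        elif (match := HUNK_HEADER.match(line)):
            new_line = int(match.group(1))
        elif path is None:
            continue
        elif line.startswith("+"):
            added[path].add(new_line)
            new_line += 1
        elif line.startswith("-"):
            pass  # removed lines do not advance new-side numbering
        else:
            new_line += 1  # context line
    return added


def uncovered_changes(diff_text, executed):
    """Return {path: sorted added lines that no test executed}."""
    gaps = {}
    for path, lines in added_lines(diff_text).items():
        missed = lines - executed.get(path, set())
        if missed:
            gaps[path] = sorted(missed)
    return gaps


diff = "\n".join([
    "--- a/orders/fees.py",
    "+++ b/orders/fees.py",
    "@@ -1,5 +1,7 @@",
    " def shipping_fee(total, express=False):",
    "     fee = 5.0",
    "+    if express:",
    "+        fee += 10.0",
    "     if total >= 50:",
    "         fee = 0.0",
    "     return fee",
])
executed = {"orders/fees.py": {1, 2, 3, 5, 6, 7}}  # assumed coverage data
print(uncovered_changes(diff, executed))  # prints {'orders/fees.py': [4]}
```

Here the `if express:` condition on line 3 was evaluated, but the body on line 4 never ran, which is exactly the kind of gap a file-level percentage hides.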

How I Use AI-Powered Test Coverage in Reviews

At some point, I stopped treating test coverage as something I looked at after a review and started pulling it into the review itself. When I’m dealing with a PR that touches real logic (conditions, fallbacks, and feature flags), I want to know which parts of that behavior are actually exercised, without spending half an hour tracing paths manually.

I use Qodo in this phase because it stays close to the pull request and the code that changed. It doesn’t ask me to think about the entire repository or historical coverage trends. It looks at the pull request as a unit of change and tries to understand what behavior was introduced or modified.

That matters because most review mistakes I’ve seen do not come from obviously broken code. They come from small, reasonable-looking changes whose impact is easy to underestimate during a fast review.

Coverage Checks Move Earlier in the Workflow

Over time, this shifted coverage checks earlier in my workflow. Instead of merging first and discovering gaps later, I can see them while the PR is still open and the author still has context. Adding a test at that point is straightforward. Fixing it after the merge rarely is.

Remediation Stays Close to the Code

Another thing that helped was how remediation works. When something is flagged, the next step is usually obvious: either the logic can be simplified, or a small test needs to be added to exercise a missed path. I’m not switching tools or opening separate reports. Everything stays close to the code and the review conversation.

Validating AI-Generated Code

What I’ve found most useful (especially with AI-assisted changes) is that Qodo helps me validate behavior I didn’t reason through line by line. AI-generated code often looks clean and confident, but it can skip edge cases or assume inputs behave a certain way. Having coverage feedback tied to those assumptions makes it easier to review the result without having to reverse-engineer every decision the AI coding assistant made.

Example: Reviewing a High-Risk PR with Multiple Architectural Changes

I’m reviewing a pull request in the orders-service codebase. This service sits at the center of the request flow and talks directly to downstream systems like payments-service, which means changes here have a wide blast radius if something slips through.

The PR is titled “Big update with multiple architectural concerns and breaking changes,” and that description is accurate. It introduces an API v2 with breaking response format changes, updates authentication requirements, and adds new service logic around order processing and notifications. Along the way, it modifies shared infrastructure code such as database configuration and request validation (paths that run on nearly every request).

From a review perspective, this is not a change I can validate by scanning a few files and checking whether tests pass. New conditions are introduced, existing assumptions are altered, and behavior now depends more heavily on runtime configuration and downstream responses. That combination is exactly where coverage gaps tend to hide.

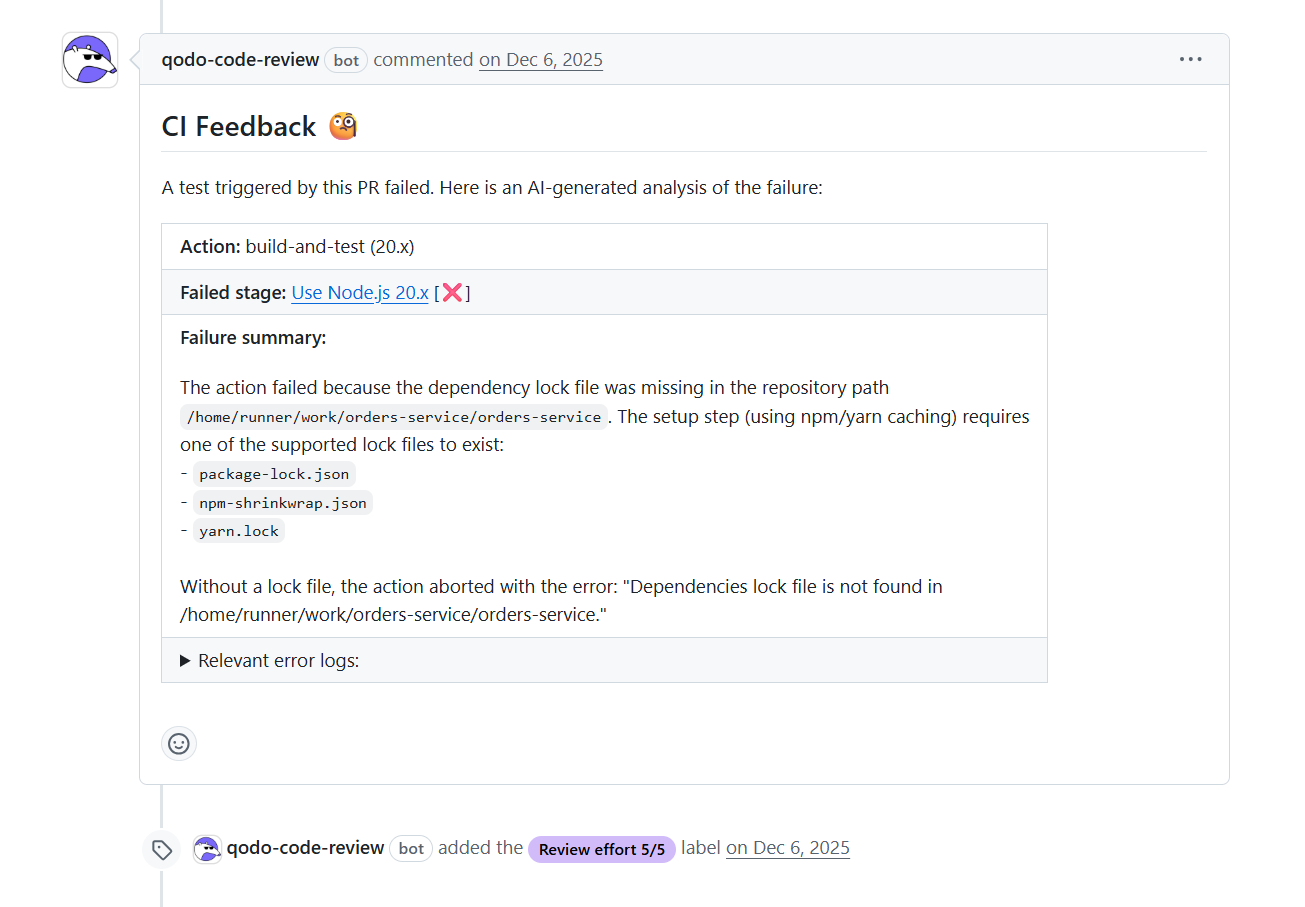

Step 1: CI Failure Shows Infrastructure Gaps

When I’m reviewing this PR, the first thing I notice is that Qodo has already left CI feedback directly on the pull request as shown in the screenshot below:

One of the tests triggered by this change failed, and instead of digging through raw logs, I got a clear explanation of what went wrong and where.

In this case, the failure has nothing to do with business logic. The build failed because the dependency lock file is missing in the orders-service path. That tells me two important things immediately:

- This PR is touching areas of the codebase where build and runtime assumptions matter

- CI failures here are masking more interesting questions I still need to answer about behavior and coverage

What I like about seeing this feedback inline is that it lets me separate concerns quickly. I can fix the mechanical issue (adding the missing lock file) without mentally attributing the failure to test quality or logic correctness.

This is where I deliberately slow down. A CI failure like this is a reminder that the PR has already drifted outside the happy path. If basic setup assumptions were missed, it increases the likelihood that new logic paths were added without being exercised as well. Fixing the lock file gets CI unstuck, but it does not tell me whether the new behavior introduced in this PR is actually covered. This is why integrating test coverage analysis into CI/CD pipelines is critical for catching gaps before merge.

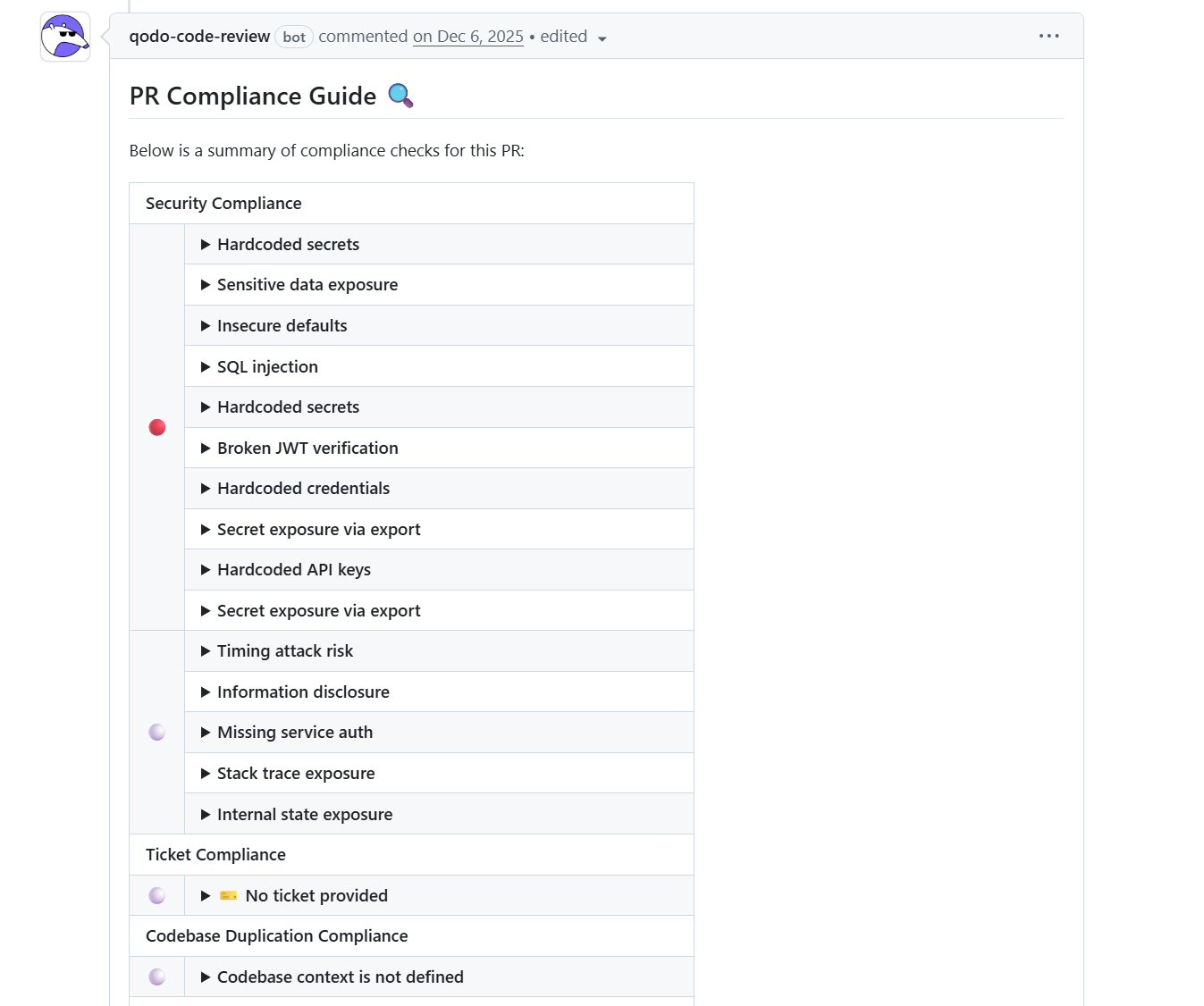

Step 2: Compliance Guide Shows Security-Critical Gaps

When I scroll further down the pull request, Qodo adds a PR Compliance Guide directly into the review thread. Here’s the compliance check added by Qodo:

I don’t treat this as a checklist to blindly clear. I use it to understand where the change is expanding the risk and where assumptions are being introduced without protection.

The first thing that stands out is the concentration of security-related signals:

- Hardcoded secrets

- Sensitive data exposure

- Broken JWT verification

- Missing service-to-service authentication

These are not edge cases. They sit directly on execution paths that were modified in this PR. Seeing them grouped together changes how I read the rest of the diff, because it tells me that configuration and auth logic were not just touched, but reshaped.

What matters from a coverage perspective is not that these issues exist, but how easily they can remain invisible without tests. For example, broken JWT verification or missing service auth does not necessarily fail fast. The code can continue to work under certain conditions (especially in development environments) while silently relying on unsafe defaults. If no test exercises the failure paths, those assumptions will only be validated in production.
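A sketch of what exercising those failure paths can look like, in Python. Everything here is an assumption for illustration: `sign_token` and `verify_token` are a simplified HMAC-signed stand-in, not real JWT and not the code from this PR, and production code should rely on a maintained JWT library. What matters is the shape of the tests: one per rejection path, including the "no secret configured" case that must fail closed rather than fall back to an unsafe default.

```python
import base64
import hashlib
import hmac
import json
import time


def sign_token(payload, secret):
    """Hypothetical signer: base64 JSON body plus an HMAC-SHA256 signature."""
    body = base64.urlsafe_b64encode(json.dumps(payload).encode()).decode()
    signature = hmac.new(secret.encode(), body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{signature}"


def verify_token(token, secret, now=None):
    """Return the payload if valid; raise ValueError on every failure path."""
    if not secret:
        raise ValueError("missing signing secret")  # fail closed
    try:
        body, signature = token.split(".")
    except ValueError:
        raise ValueError("malformed token") from None
    expected = hmac.new(secret.encode(), body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        raise ValueError("bad signature")
    payload = json.loads(base64.urlsafe_b64decode(body.encode()))
    current = time.time() if now is None else now
    if payload.get("exp", 0) <= current:
        raise ValueError("token expired")
    return payload


def rejection_reason(token, secret, now):
    """Return the error message for a rejected token, or None if accepted."""
    try:
        verify_token(token, secret, now=now)
    except ValueError as exc:
        return str(exc)
    return None


token = sign_token({"sub": "payments-service", "exp": 200}, "test-secret")

# One happy-path check, then one check per failure path.
assert rejection_reason(token, "test-secret", now=100) is None
assert rejection_reason(token, "other-secret", now=100) == "bad signature"
assert rejection_reason(token, "test-secret", now=300) == "token expired"
assert rejection_reason(token, "", now=100) == "missing signing secret"
assert rejection_reason("not-a-token", "test-secret", now=100) == "malformed token"
```

Passing `now` explicitly keeps the expiry test deterministic, so the failure path runs the same way in CI as on a laptop.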

The compliance view helps me narrow where to look next. Instead of scanning the entire PR, I focus on the files tied to these findings: authentication handlers, configuration modules, and service integration points. Those are the same places where I expect coverage gaps to hide, because behavior there often depends on headers, environment variables, or downstream responses that tests rarely simulate unless someone explicitly adds them.
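Environment-dependent branches are easy to test once the environment is passed in rather than read globally. The following Python sketch assumes a hypothetical `database_url` loader and made-up variable names (`DATABASE_URL`, `APP_ENV`); it is not the configuration code from this PR. Each branch, including the production failure, gets an explicit test by handing the function a plain dictionary instead of the real process environment.

```python
def database_url(env):
    """Hypothetical config loader; env is a mapping such as os.environ."""
    url = env.get("DATABASE_URL")
    if url:
        return url
    if env.get("APP_ENV", "production") == "development":
        # Development-only fallback; never used when APP_ENV is unset.
        return "postgresql://localhost:5432/orders_dev"
    raise RuntimeError("DATABASE_URL must be set outside development")


def fails_without_url(env):
    """Return True if the loader refuses to start with this environment."""
    try:
        database_url(env)
    except RuntimeError:
        return True
    return False


# Explicit value wins in any environment.
assert database_url({"DATABASE_URL": "postgresql://db/orders"}) == "postgresql://db/orders"
# Development may fall back to a local database.
assert database_url({"APP_ENV": "development"}).endswith("/orders_dev")
# Production, or an unset APP_ENV, must fail instead of guessing.
assert fails_without_url({"APP_ENV": "production"})
assert fails_without_url({})
```

The design choice worth noting is the default for `APP_ENV`: treating a missing value as production means a misconfigured deployment fails loudly instead of silently using a development default.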

Step 3: Coverage Analysis Shows Almost All New Logic Is Untested

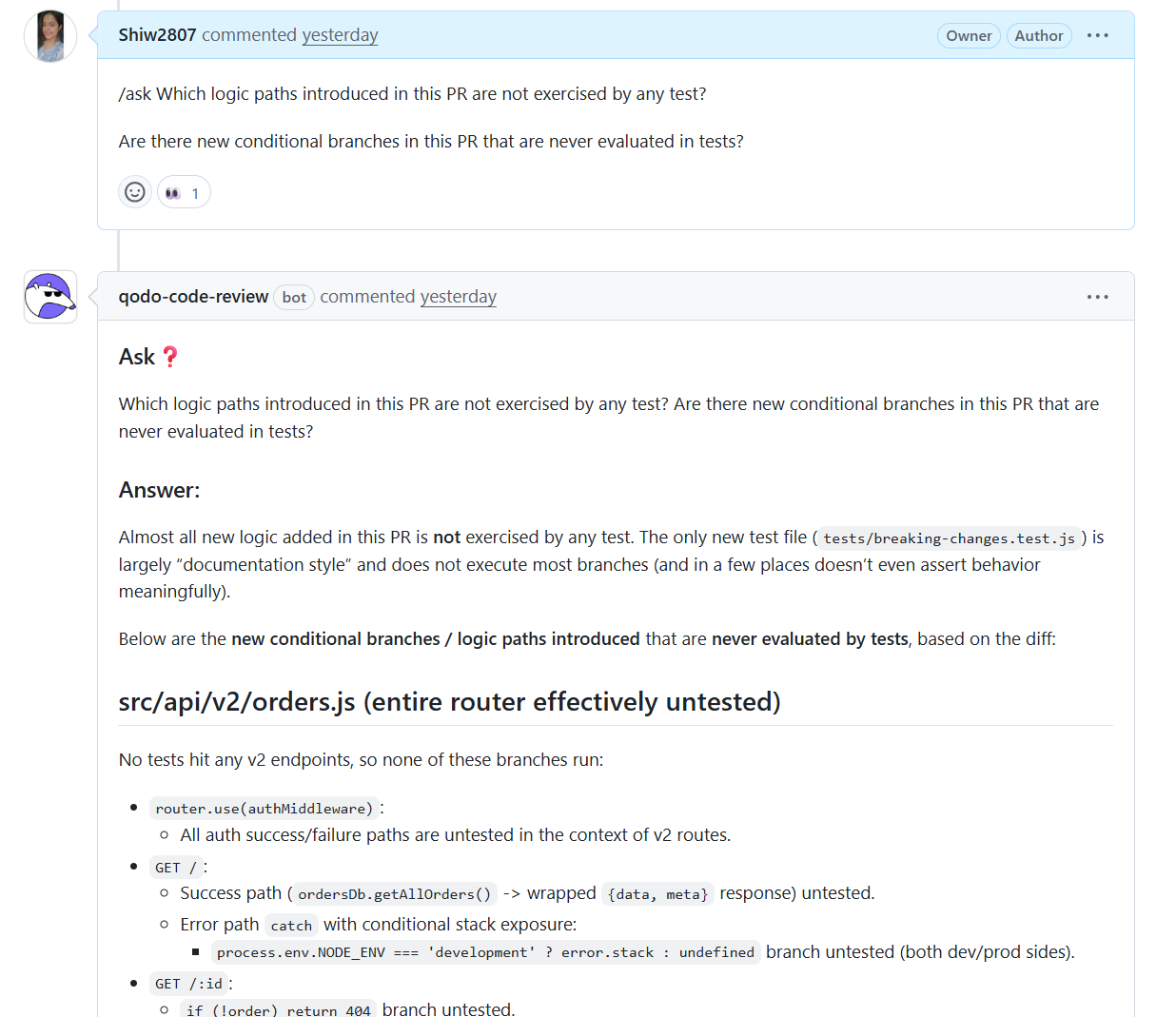

When I ask Qodo which logic paths in this PR are not exercised by tests, the response is direct: almost all of the new logic is effectively untested. Here’s how Qodo responded:

The only new test file added behaves more like documentation than validation and does not execute most of the branches introduced in the diff.

Qodo makes it clear that the entire v2 orders router is untested. No tests hit the new v2 endpoints, which means none of the authentication, request handling, or error paths inside those routes have ever run under test conditions.

Even basic branches are untested:

- Auth success or failure

- Returning a 404 when an order is missing

- Handling errors differently in development versus production

These exist only as assumptions right now.

What matters to me in review is that this feedback is tied directly to the PR. These are not historical gaps or theoretical risks. They are execution paths introduced here that will run for the first time in production if merged as-is. At that point, approving the change becomes a conscious trade-off rather than a blind one.

How AI-Powered Test Coverage Changes Review Decisions

In the PR above, AI-powered test coverage changed the outcome of the review in a very specific way. Without it, this change would have been approved once CI was green and the new test file was present. On paper, the PR looked “covered.” In practice, none of the new v2 routes had ever been exercised.

Seeing that clearly altered how I evaluated the change. The fact that authentication middleware, request handlers, and error paths in the v2 orders router had zero execution under tests meant that approving this PR would effectively push the first execution to production. That is not an abstract risk. These routes sit on a request path and interact with downstream services, which makes failures both visible and expensive.

Making Trade-Offs Explicit

The coverage feedback also made the trade-offs explicit. Instead of asking for broad test coverage or a full suite rewrite, I could narrow the review feedback to a small group of concrete actions:

- Add a test that hits the v2 router through the auth middleware

- Add a test that validates the 404 path in GET /:id

- Add one failure-path test to validate error handling behavior

Each request was directly tied to the logic introduced in this PR.
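The three requests above can be sketched as small, targeted tests. This Python example is a stand-in, not the PR's code: `get_order_v2`, the token check, and the `FailingStore` class are all hypothetical, and a real router would be tested through its framework's test client. The structure is what carries over: one test per newly introduced path.

```python
def get_order_v2(order_id, headers, store, env="production"):
    """Hypothetical stand-in for GET /:id on a v2 router; returns (status, body)."""
    if headers.get("Authorization") != "Bearer valid-token":  # auth stand-in
        return 401, {"error": "unauthorized"}
    try:
        order = store.get(order_id)
    except Exception as exc:
        # Development exposes the detail; production hides it.
        detail = str(exc) if env == "development" else "internal error"
        return 500, {"error": detail}
    if order is None:
        return 404, {"error": "order not found"}
    return 200, {"data": order}


class FailingStore:
    """Simulates a downstream failure when the order is looked up."""

    def get(self, order_id):
        raise ConnectionError("database unreachable")


AUTH = {"Authorization": "Bearer valid-token"}
STORE = {"o-1": {"id": "o-1", "total": 42}}


def test_route_goes_through_auth():
    assert get_order_v2("o-1", {}, STORE)[0] == 401
    assert get_order_v2("o-1", AUTH, STORE) == (200, {"data": STORE["o-1"]})


def test_missing_order_returns_404():
    assert get_order_v2("missing", AUTH, STORE)[0] == 404


def test_failure_path_hides_detail_in_production():
    status, body = get_order_v2("o-1", AUTH, FailingStore(), env="production")
    assert (status, body["error"]) == (500, "internal error")
    status, body = get_order_v2("o-1", AUTH, FailingStore(), env="development")
    assert (status, body["error"]) == (500, "database unreachable")


# Run directly; a test runner such as pytest would discover these by name.
for test in (test_route_goes_through_auth,
             test_missing_order_returns_404,
             test_failure_path_hides_detail_in_production):
    test()
```

Three short tests like these cover the auth, not-found, and error branches without requiring a broader suite rewrite.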

Changing the Approval Conversation

This changed the approval conversation. The question was no longer whether the PR “had tests,” but whether the new behavior was safe to run without them. Once the missing paths were visible, approving the PR as-is would have meant consciously accepting that risk. Adding a small number of targeted tests cut that risk significantly without slowing the merge down.

That’s the practical impact for me. AI-powered test coverage doesn’t make reviews stricter; it makes them more precise. It shifts decisions from gut feel to evidence, and it keeps the first execution of new logic out of production and inside the review window, where fixes are still cheap and local.

6 Ways AI-Powered Test Coverage Improves Code Review

Based on my experience using Qodo for test coverage analysis, here are the practical improvements it brings to the review process:

1. Surfaces Untested Logic Before Merge, Not After

Traditional coverage tools show repository-wide percentages. AI-powered coverage shows which logic paths introduced by this specific PR have never run. This shifts the detection window from post-merge to pre-merge, when fixes are cheaper.

2. Makes Risk Explicit Instead of Implicit

Without coverage analysis, approving a PR means implicitly accepting that untested paths might exist. With AI-powered coverage, those paths are named explicitly. The approval decision becomes conscious rather than assumed.

3. Keeps Feedback Scoped to the Diff

Reviewers don’t need to think about the entire codebase. Qodo focuses on what changed in this PR: which branches were added, which error paths were introduced, and which config-dependent logic was modified. This keeps cognitive load manageable.

4. Allows Targeted Test Requests

Instead of asking for “more tests” or “better coverage,” reviewers can request specific tests for specific paths: “Add a test for the auth failure case on line 45” or “Exercise the 404 path in the v2 router.” This makes feedback actionable.

5. Catches AI-Generated Edge Case Gaps

AI-generated code often handles the happy path well but skips error handling, validation, or configuration fallbacks. AI-powered coverage catches these gaps by identifying paths that were added but never exercised.

6. Works Within the PR Workflow

Coverage feedback appears directly in the pull request as inline comments or summary reports. Reviewers don’t switch tools or open dashboards. Everything happens in the same place where approval decisions are made.

Practical Workflow: How to Use Qodo for Coverage Analysis

Here’s how I actually use Qodo during code review to catch coverage gaps:

Step 1: Open the pull request and let Qodo run its analysis automatically (or trigger it manually if needed).

Step 2: Look for the compliance guide and coverage summary in the PR comments. These highlight security issues and untested paths.

Step 3: Focus on logic paths tied to high-risk areas: authentication, error handling, configuration-dependent branches, new API routes, and feature-flagged code.

Step 4: Ask Qodo to highlight which specific branches or conditions in the PR are not exercised by tests.

Step 5: Request targeted tests for the untested paths. Be specific: “Add a test for the JWT verification failure case” rather than “Add more tests.”

Step 6: Re-run the analysis after tests are added to confirm the gaps are closed.

Step 7: Treat unresolved coverage gaps as explicit approval decisions, not implicit risks. If you approve with gaps remaining, document why.

This workflow keeps coverage checks inside the review window, where context is fresh and fixes are local.
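Step 7 can be made mechanical with a small gate. The Python sketch below is one possible shape, assuming a hypothetical `Gap` record and `review_gate` function; the file path `orders/routes_v2.py` is made up, and none of this reflects a Qodo API. A gap blocks approval unless someone has recorded a non-empty reason for accepting it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gap:
    """An untested path introduced by the PR (hypothetical record)."""
    path: str
    description: str


def review_gate(gaps, waivers):
    """Return (approved, messages).

    waivers maps a Gap to the documented reason for accepting it.
    A gap without a non-empty reason blocks approval.
    """
    approved = True
    messages = []
    for gap in gaps:
        reason = waivers.get(gap, "").strip()
        if reason:
            messages.append(f"WAIVED {gap.path}: {gap.description} ({reason})")
        else:
            approved = False
            messages.append(f"BLOCKING {gap.path}: {gap.description}")
    return approved, messages


auth_gap = Gap("orders/routes_v2.py", "auth failure branch never executed")
dev_gap = Gap("orders/routes_v2.py", "development-only error detail never executed")

approved, messages = review_gate(
    [auth_gap, dev_gap],
    {dev_gap: "dev-only output, tracked in follow-up ticket"},
)
print(approved)  # prints False: the auth gap has no documented waiver
for message in messages:
    print(message)
```

Keeping the waiver reason next to the gap means an approval with known gaps leaves a record of why, which is the difference between an explicit decision and an implicit risk.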

2026: Test Coverage Becomes Part of the Review Gate

In 2026, test coverage is no longer something teams check after merge. It’s part of the review gate, analyzed automatically when a PR is opened, and validated before approval.

AI-powered test coverage analysis makes this practical by focusing on the code that changed (not the entire repository) and showing untested paths in real time during review. This shifts the question from “Is the codebase covered?” to “Is this specific change validated?”

Tools like Qodo implement this by:

- Analyzing the diff to identify new or modified logic paths

- Checking whether any test exercises those paths

- Reporting gaps directly in the pull request as inline feedback

- Keeping remediation scoped to the change (not the entire codebase)

The result: fewer production incidents caused by untested behavior, faster feedback loops (tests are added while context is fresh), and more confident approvals (reviewers know what’s validated and what isn’t).

For engineering teams in 2026, this becomes table stakes. Coverage analysis moves from a post-merge metric to a pre-merge gate, and AI makes it feasible to check every PR without slowing down delivery.

FAQ

1. What is AI-powered test coverage analysis?

AI-powered test coverage analysis checks which logic paths introduced by a pull request are actually exercised by tests. Instead of reporting overall coverage percentages, it focuses on new or modified code and identifies untested branches, conditions, and execution paths before merge. Tools like Qodo analyze the diff, understand which behavior was added, and show exactly which paths have never run under test conditions.

2. Why are coverage percentages not enough during code review?

Coverage percentages measure whether a file or line was executed at least once, not whether new behavior introduced in a PR was validated. When changes modify existing code, coverage can remain stable even though new logic paths have never run under test. For example, a file might be “90% covered,” but if a PR adds a new error-handling branch inside a covered function, that branch can go completely untested while the coverage percentage stays the same.
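A quick worked example of the arithmetic, in Python, using made-up numbers: a file with 1,000 executable lines of which 900 are covered, and a PR that adds a 4-line error-handling branch that no test reaches.

```python
def percent(covered, total):
    """Line coverage as a percentage."""
    return 100.0 * covered / total


before = percent(900, 1000)       # file before the PR
after = percent(900, 1000 + 4)    # same tests, 4 new untested lines
changed = percent(0, 4)           # coverage of only the lines the PR added

print(f"file before PR: {before:.1f}%")   # prints 90.0%
print(f"file after PR:  {after:.1f}%")    # prints 89.6%
print(f"changed lines:  {changed:.1f}%")  # prints 0.0%
```

On a dashboard that rounds to whole numbers, both file-level figures display as 90%, while coverage of the change itself is 0%.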

3. How does AI-powered test coverage help during pull request reviews?

It shows untested execution paths directly in the context of the pull request. Reviewers can see which routes, conditionals, error paths, or configuration branches introduced by the change are untested, making risk visible before approval. This allows reviewers to request specific, minimal tests (like “add a test for the auth failure case on line 45”) rather than vague requests for “more coverage.”

4. What types of issues does AI-powered test coverage commonly uncover?

It often uncovers untested authentication paths, missing error-handling tests, configuration and environment-dependent branches, feature-flagged logic, and new API routes that reuse existing but “covered” infrastructure. It’s especially good at catching gaps in AI-generated code, which tends to handle happy paths well but skip edge cases, validation, and failure scenarios.

5. When should developers use AI-powered test coverage analysis?

It is most effective during code review, before merge. Using it at review time allows teams to add small, targeted tests while context is fresh, instead of discovering gaps later through production failures or regressions. The best workflow is to run analysis automatically when a PR is opened, show gaps during review, add tests to close the gaps, and re-run analysis to confirm they’re closed before approval.

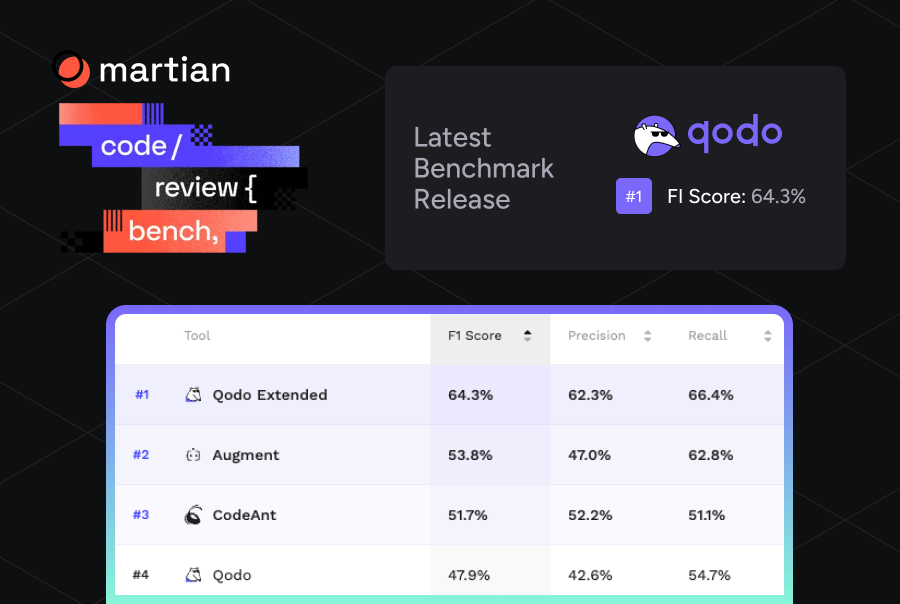

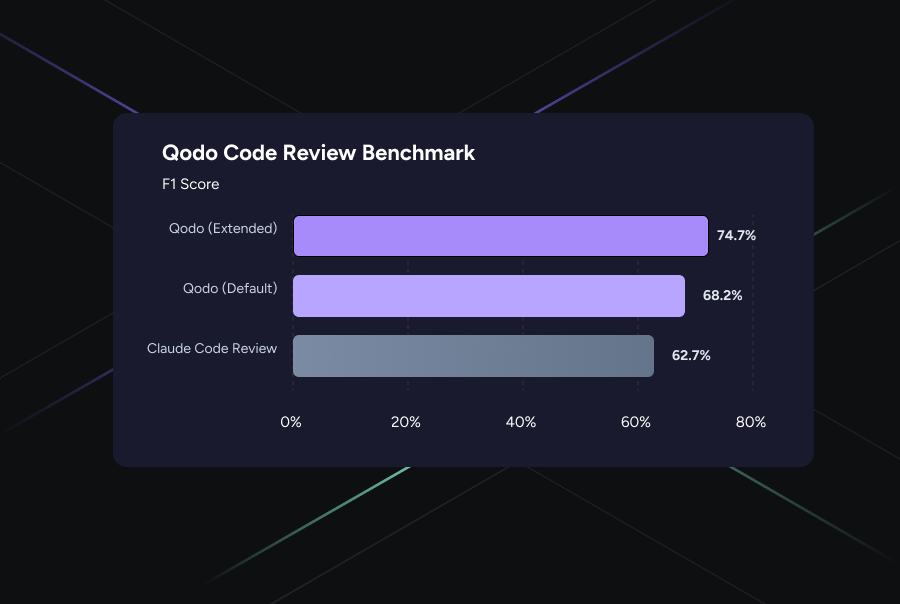

6. How does Qodo’s AI-powered coverage differ from traditional coverage tools?

Traditional coverage tools (like Istanbul, JaCoCo, or Coverage.py) report repository-wide percentages and mark lines as “covered” if they executed at least once. Qodo focuses specifically on the pull request diff, identifying which logic paths were added or modified and whether any test exercises them. It ties coverage directly to the change being reviewed, making gaps actionable during the approval process rather than reporting historical metrics.