5 Python Code Quality Tools Built for 100+ Developer Teams

TL;DR

- Most Python code quality issues are behavioral bugs that pass CI: Silent exception handling, dynamic typing, and overly complex functions hide side effects that fail later in production, not during review.

- Poor code quality slows teams more than lack of features: Developers spend more time understanding and avoiding existing logic than shipping new work. Reviews become “don’t touch this” discussions instead of correctness checks.

- Inconsistent patterns across repositories make collaboration arduous: Each team reimplements similar logic slightly differently, turning PR reviews into style debates instead of safety checks. Over time, this reduces confidence and delivery speed.

- The 5 tools evaluated: Qodo, Pylint, Flake8, Bandit, and MyPy, compared through an enterprise lens to understand where each fits in a modern code quality stack, and how they complement each other rather than replace one another.

Enterprise teams building software with Python are shipping code faster than their review and governance models were built to sustain. Faros AI’s 2025 study shows that teams using AI complete 21% more tasks and merge nearly 98% more pull requests, while review time can grow by up to 91%.

That shift doesn’t mean existing quality tools are obsolete. Linters, type checkers, and security scanners still play a foundational role in preventing low-level mistakes early. The real challenge at scale is that no single tool covers every layer of code risk.

Enterprise teams need a layered approach, a code quality stack, where traditional static analysis tools work alongside higher-level review and governance systems.

Working in large Python codebases, I keep seeing the same quality issues resurface. Most of them reached production and showed up later as inconsistent behavior across services or as refactors nobody wanted to touch. While looking into this, I came across a Reddit thread on common Python mistakes. The most notable ones were:

- Mutable default arguments

- Overly broad except: blocks

- Misuse of globals and unclear contracts between functions

- Tightly coupled functions that make refactoring risky, so teams avoid changing code that clearly needs cleanup

- Dynamic typing that lets invalid data move through multiple layers before breaking far from the source
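Two of these are easy to show in a few lines. The sketch below is illustrative only: the function names and the payload shape are hypothetical and not taken from the thread. It contrasts a mutable default argument and a bare except: with safer equivalents.

```python
import json


def add_tag_buggy(tag, tags=[]):
    # The default list is created once, at definition time,
    # so every call that omits `tags` shares the same object.
    tags.append(tag)
    return tags


def add_tag_fixed(tag, tags=None):
    # Use None as a sentinel and build a fresh list per call.
    if tags is None:
        tags = []
    tags.append(tag)
    return tags


def parse_amount_silent(raw):
    # A bare `except:` hides every failure, including bugs unrelated
    # to parsing, and returns a plausible-looking value instead.
    try:
        return json.loads(raw)["amount"]
    except:
        return 0


def parse_amount_strict(raw):
    # Catch only the failures we expect and surface them explicitly.
    try:
        return json.loads(raw)["amount"]
    except (json.JSONDecodeError, KeyError) as exc:
        raise ValueError(f"invalid payment payload: {raw!r}") from exc
```

Both buggy variants pass a quick happy-path test, which is why they tend to survive review.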

It’s important to be clear upfront: these tools are built for different purposes. Linters like Pylint and Flake8 enforce style and catch syntactic issues. MyPy enforces type discipline, whereas Bandit scans for insecure coding patterns.

Qodo operates at a different layer, inside pull request workflows, reasoning about behavioral risk, cross-repo patterns, and review consistency. This isn’t about replacing linters. High-performing teams use them together.

What to Look for in a Python Code Quality Tool

For enterprise teams building with Python and its frameworks, code quality tools need to do more than bring up issues. They need to support consistent enforcement, scale across repositories, and reduce review burden without introducing noise. The criteria below reflect what matters in production environments where Python is used across multiple services, teams, and CI pipelines.

1. Context-Aware Analysis That Understands Systems, Not Just Files

Most Python quality problems are not local to one file. They sit at integration boundaries, service calls, shared utilities, implicit contracts, and duplicated logic across repositories.

A useful tool needs to understand how a change interacts with code outside the current diff, because that is where production risk usually appears.

What this looks like in reality:

A developer updates a Python service that calls a downstream payments API.

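    import requests

    def create_charge(payload):  # hypothetical wrapper so the snippet runs
        # Deliberately naive: this is the code under review.
        response = requests.post(
            "http://payments/charge",
            json=payload,
        )
        if response.status_code == 200:
            return response.json()["id"]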

Here, the tests pass, linting passes, and the type checks pass.

A file-level tool sees nothing wrong. In production, this breaks when the payments service starts enforcing auth headers or changes its response shape. The real issue was never in this file alone; it was in how this code diverged from the shared client used elsewhere. Without cross-repo context, that risk is invisible during review.

2. Enforcing Quality Where Merge Decisions Actually Happen

Quality controls only work if they sit where merge decisions are made. Tools that run after the fact or only as advisory comments do not scale once PR volume increases. In practice, teams need the ability to gate merges when changes violate standards, contracts, or known patterns.

This is especially important for Python, where dynamic typing and flexible error handling allow broken behavior to pass CI quietly. If enforcement does not happen at review time, duplicated logic, unsafe patterns, and missing validation land in main branches by default.
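As a rough illustration of gating with the tools already in place, the sketch below runs each checker and reports which ones failed, so a CI job can exit non-zero and block the merge. The `CHECKS` mapping and the `src` path are assumptions for this example, not a recommended configuration.

```python
import subprocess

# Hypothetical check list; adjust commands and paths to your repository.
CHECKS = {
    "flake8": ["flake8", "src"],
    "pylint": ["pylint", "src"],
    "mypy": ["mypy", "src"],
    "bandit": ["bandit", "-r", "src"],
}


def failed_checks(checks, run=subprocess.run):
    """Run each command and return the names of those that exit non-zero."""
    failures = []
    for name, command in checks.items():
        result = run(command, capture_output=True, text=True)
        if result.returncode != 0:
            failures.append(name)
    return failures
```

In a pipeline, the job would call `failed_checks(CHECKS)` and exit with a non-zero status when the list is not empty. This only gates on rule-based signals; it does not cover the contextual risks discussed in this article.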

3. High-Signal Feedback Instead of Review Noise

High-volume teams can’t afford tools that flood reviews with style issues or cosmetic warnings. What slows teams down are code behavioral problems: silent exception handling, missing validation, fragile tests, unsafe assumptions, or duplicated logic.

A high-quality tool highlights issues that change runtime behavior or long-term maintainability. It helps developers answer “is this safe to merge?” instead of debating formatting or naming. Anything that distracts from that question becomes noise at scale.

4. CI/CD Integration That Strengthens Existing Signals

Python teams already run complex CI pipelines. A quality tool needs to reinforce existing signals, not add another brittle step engineers learn to ignore. The most useful tools explain why something is risky even when CI is green.

Top 5 Python Code Quality Tools for Enterprises

| Criteria | Qodo | Pylint | Flake8 | Bandit | MyPy |
| --- | --- | --- | --- | --- | --- |
| Pull request–native review | Yes | No | No | No | No |
| Merge gating and enforcement | Yes | Limited | Limited | Limited | Limited |
| Cross-repository context | Yes | No | No | No | No |
| Behavioral and integration risk analysis | Yes | No | No | No | No |
| Test quality and missing test detection | Yes | No | No | No | No |
| Security analysis with system context | Yes | No | No | Limited | No |
| Scales across multi-repo enterprise codebases | Yes | Limited | Limited | Limited | Limited |

1. Qodo

Qodo is the best AI Code Review Platform built for enterprise Python teams dealing with large, multi-repository systems. Qodo reviews changes in context, not just within a single file or repository. It understands how a Python change interacts with shared libraries, downstream services, and existing patterns across the organization, which is where most real production issues come from.

It fits into the existing workflow without adding extra steps. Reviews happen directly in the pull request, and Qodo can reference Jira or Azure DevOps tickets to check whether the change actually matches the intended scope. From a code quality standpoint, this helps keep behavior, tests, and standards consistent across repositories, especially when review load is high and teams are moving quickly.

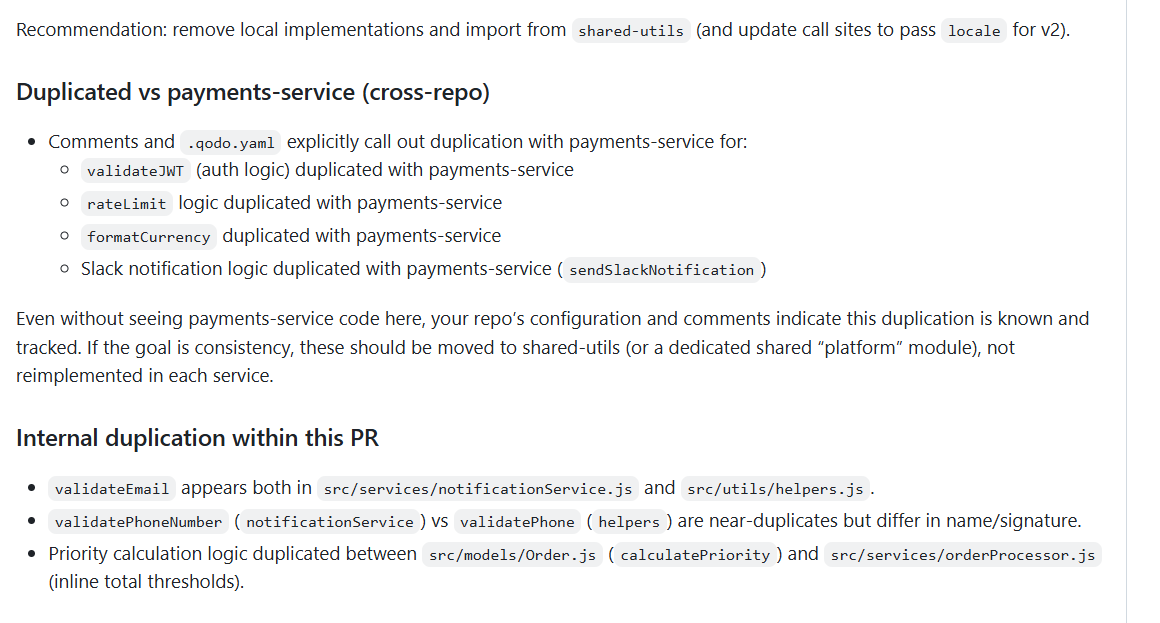

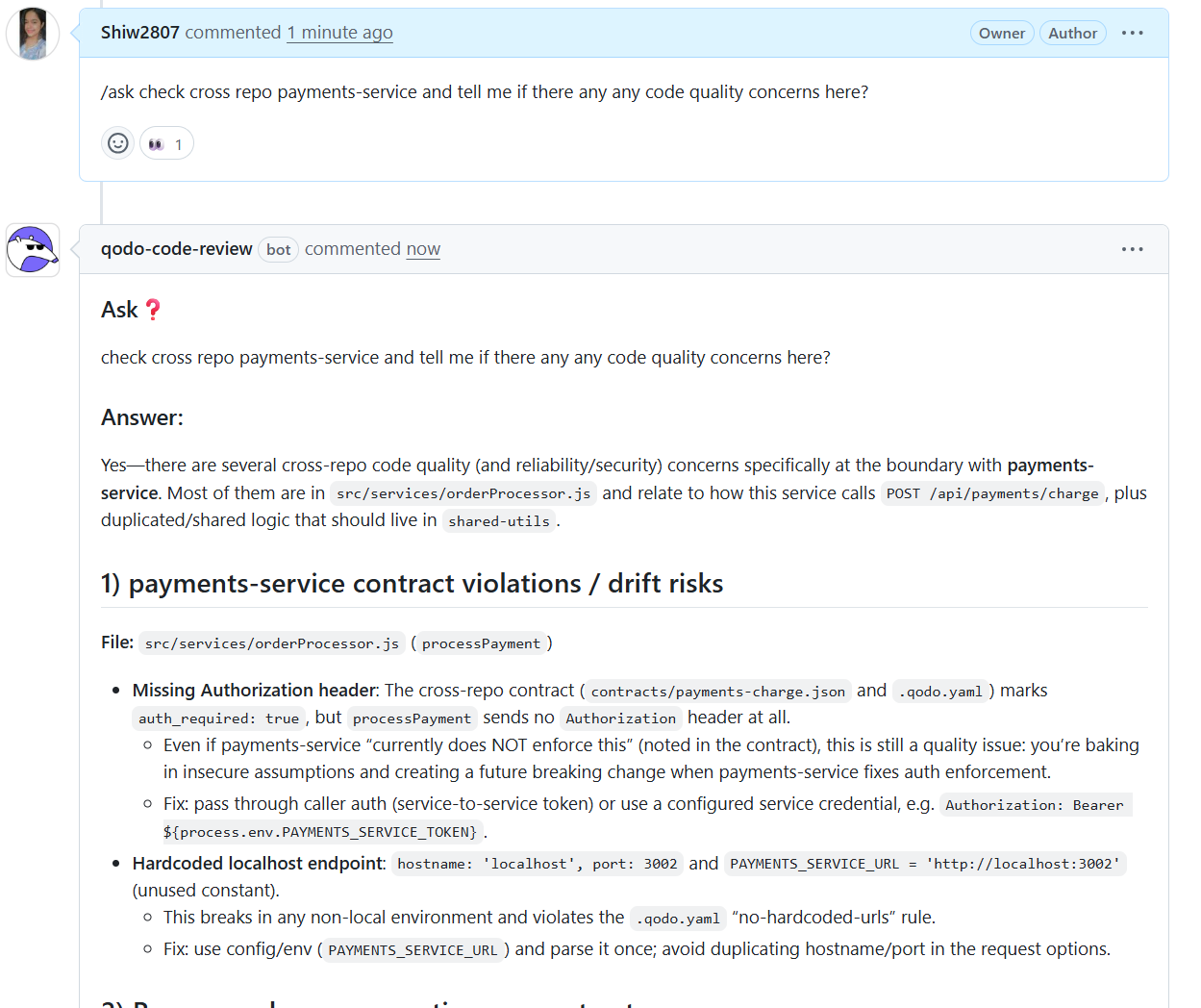

Hands-on: Catching Cross-Repo Duplication and Drift With Qodo

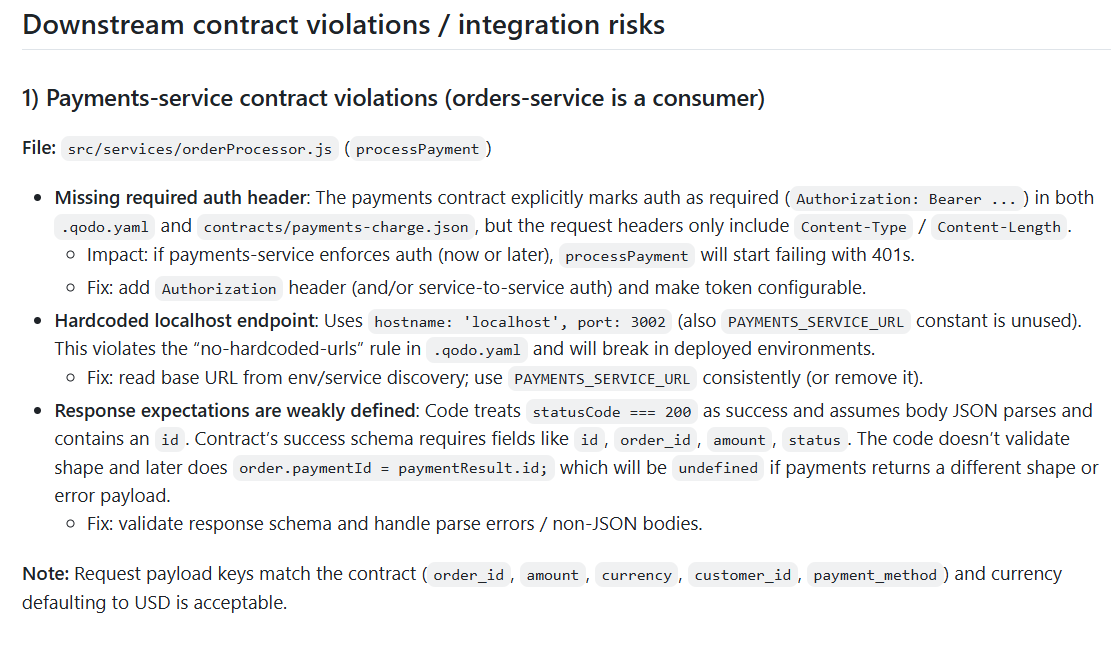

When I ran this PR through Qodo, I asked it to reason across repositories. The first thing Qodo showed was downstream contract violations with the payment service. It tied findings back to the exact function and file where the integration happens. Qodo explicitly called out that even if the payment service doesn’t currently enforce authorization, the absence of an Authorization header bakes in a future breaking change.

It also flagged response handling problems that are easy to miss during manual review. The code assumed statusCode === 200 and a specific response shape, but the contract clearly requires fields like order_id, amount, and status. Qodo pointed out that if the downstream service changes its response or returns a non-JSON payload, this logic will silently fail.
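To make the response-handling concern concrete, here is a minimal sketch of defensive parsing. The field names come from the contract mentioned above; `ContractError` and `parse_charge_response` are hypothetical names I introduced for illustration, not part of any Qodo output.

```python
import json

# Field names taken from the contract described in the text.
REQUIRED_FIELDS = ("order_id", "amount", "status")


class ContractError(Exception):
    """Raised when a downstream response does not match the expected shape."""


def parse_charge_response(status_code, body):
    """Validate a raw HTTP response instead of assuming the happy path."""
    if status_code != 200:
        raise ContractError(f"unexpected status code: {status_code}")
    try:
        data = json.loads(body)
    except json.JSONDecodeError as exc:
        raise ContractError("response body is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ContractError("response body is not a JSON object")
    missing = [field for field in REQUIRED_FIELDS if field not in data]
    if missing:
        raise ContractError("missing fields: " + ", ".join(missing))
    return data
```

The point is that every failure mode becomes an explicit error rather than a silent fall-through.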

What made this valuable at enterprise scale is that Qodo did this without needing the downstream repository checked out locally. It inferred expectations from declared contracts and repository configuration. In environments with hundreds of repositories, this is the difference between theoretical governance and something enforceable.

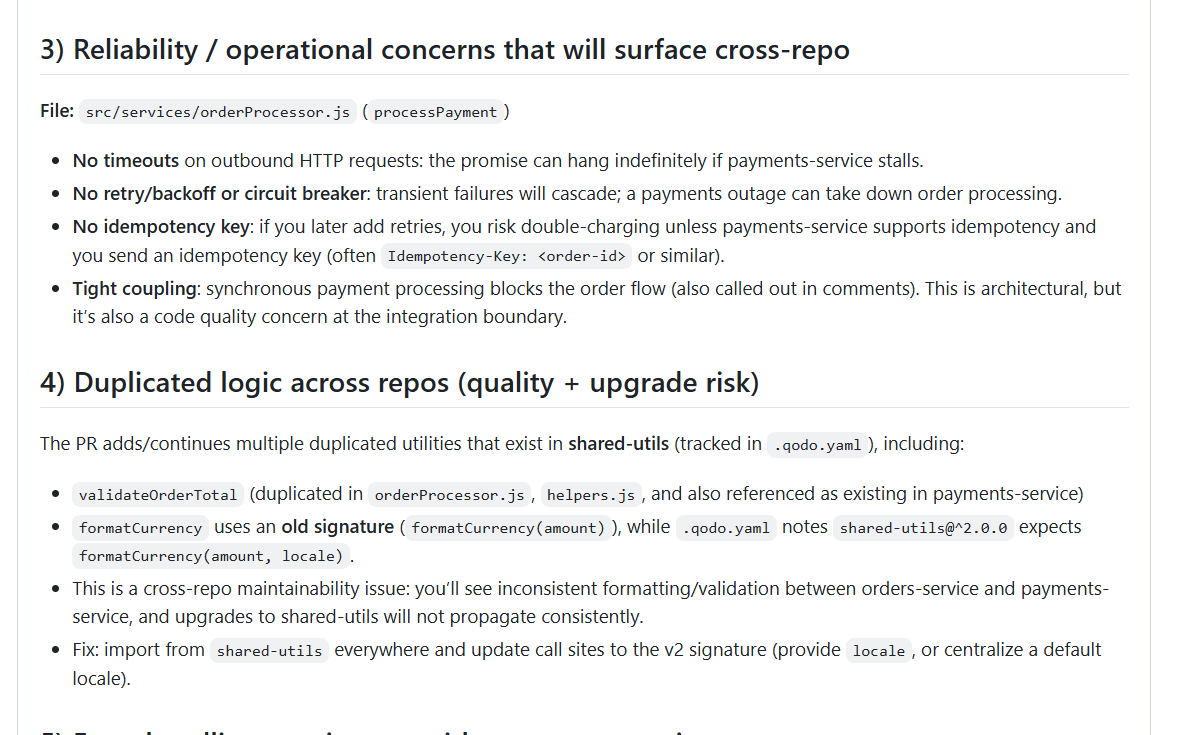

Qodo identified duplicated logic across services, including authentication helpers, rate limiting, currency formatting, and notification utilities. These were not flagged as generic “duplication warnings.” Instead, Qodo tied them back to known shared libraries and even noted version mismatches in expected function signatures.

This matters because duplication across repositories creates long-term upgrade risk. When shared utilities change, duplicated logic doesn’t. That leads to inconsistent behavior across services and makes platform upgrades painful. Qodo made this risk explicit and actionable by recommending exactly where logic should live and how call sites should be updated.

Pros

- Context-rich review that reasons beyond file-level or diff-only analysis

- Cross-repo visibility across shared Python libraries and services

- Pull request enforcement with merge gating based on quality and policy rules

- Ticket-aware logic that validates scope against Jira or Azure DevOps items

- Detects missing or brittle tests rather than relying on pass or fail signals

- Scales across multi-repo, polyglot enterprise environments

- Enterprise deployment options, including VPC, on-prem, and zero retention

Cons

- Requires initial repository indexing to build context

- Rule calibration is needed to align enforcement with organizational standards

- Not intended as a lightweight, developer-only linting tool

Pricing

- Free: $0/month, limited credits

- Teams: ~$30/user/month, collaboration features, private support

- Enterprise: Custom pricing, SSO, analytics, multi-repo context, on-prem

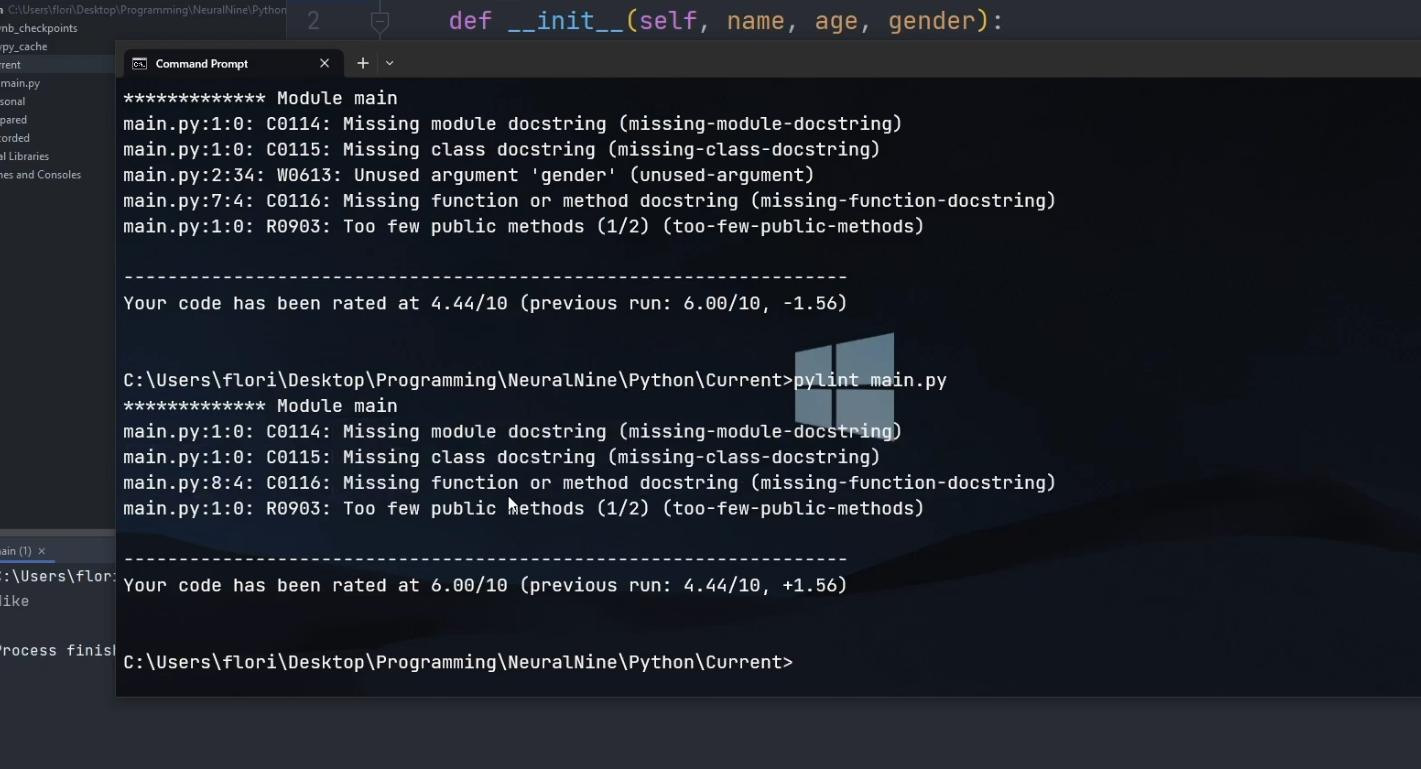

2. Pylint

Pylint is a static analysis tool for Python that evaluates source code against a large set of predefined rules covering syntax errors, coding standards, and common programming mistakes. It operates by analyzing Python files directly and assigning scores based on rule compliance, making it a common choice for teams that want explicit enforcement of style and structural conventions.

In enterprise environments, Pylint is typically used as part of CI pipelines to enforce baseline coding standards. It functions at the repository level and does not reason about pull request intent, cross-repository impact, or downstream behavior.

Hands-on with Pylint: What It Flags in a Codebase

When I ran this code through Pylint, the output was entirely rule-driven. It flagged missing module, class, and function docstrings, unused arguments, and design-related warnings such as “too few public methods.” It also generated a numeric score, which reflects how closely the file adheres to the configured ruleset. This makes it straightforward to enforce baseline Python conventions and spot obvious structural issues early.

In hands-on use, Pylint works well as an early static check during local development or in CI. It operates strictly at the file or repository level and applies rules consistently, which helps teams maintain a common coding standard across contributors. For catching syntax-level problems, unused code, and violations of agreed-upon conventions, it does its job predictably.

At enterprise scale, Pylint does not provide enough context. It does not understand pull request intent, cross-repository dependencies, downstream impact, or compliance requirements. As a result, Pylint is typically used as a supporting tool, not as a system for deciding whether a change is safe, compliant, or ready to merge.
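For reference, a file as small as the one below is enough to reproduce the kinds of findings described above. This is a hypothetical stand-in, not the file from my run. With a default configuration I would expect missing-module-docstring, missing-class-docstring, missing-function-docstring, unused-argument, and too-few-public-methods, though the exact set depends on your Pylint version and settings.

```python
class Greeter:
    def greet(self, name, punctuation):
        # `punctuation` is accepted but never used.
        return "Hello, " + name
```

Saved as a hypothetical example.py, it can be checked with `pylint example.py`.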

Pros

- Detects a wide range of Python-specific issues, including unused variables and incorrect imports

- Highly configurable rule set with fine-grained control

- Integrates with most CI systems and Python build workflows

- Widely adopted and well-documented

Cons

- Produces a high volume of findings, requiring significant tuning to reduce noise

- Operates at file and repository scope only, with no cross-repo context

- Does not evaluate behavioral change or test reliability

- Enforcement depends on CI configuration rather than PR-level governance

- Limited usefulness in large, multi-repo environments without heavy customization

Pricing

Free and open-source

3. Flake8

Flake8 is a Python linting tool that combines multiple checks into a single execution, primarily focusing on style enforcement, basic correctness issues, and adherence to PEP 8 conventions. It is commonly used to provide fast feedback during development or as a lightweight gate in CI pipelines.

In enterprise settings, Flake8 is typically applied to individual repositories to enforce consistency. It does not analyze runtime behavior, cross-repository impact, or pull request intent, and its findings are limited to the scope of the files being checked.

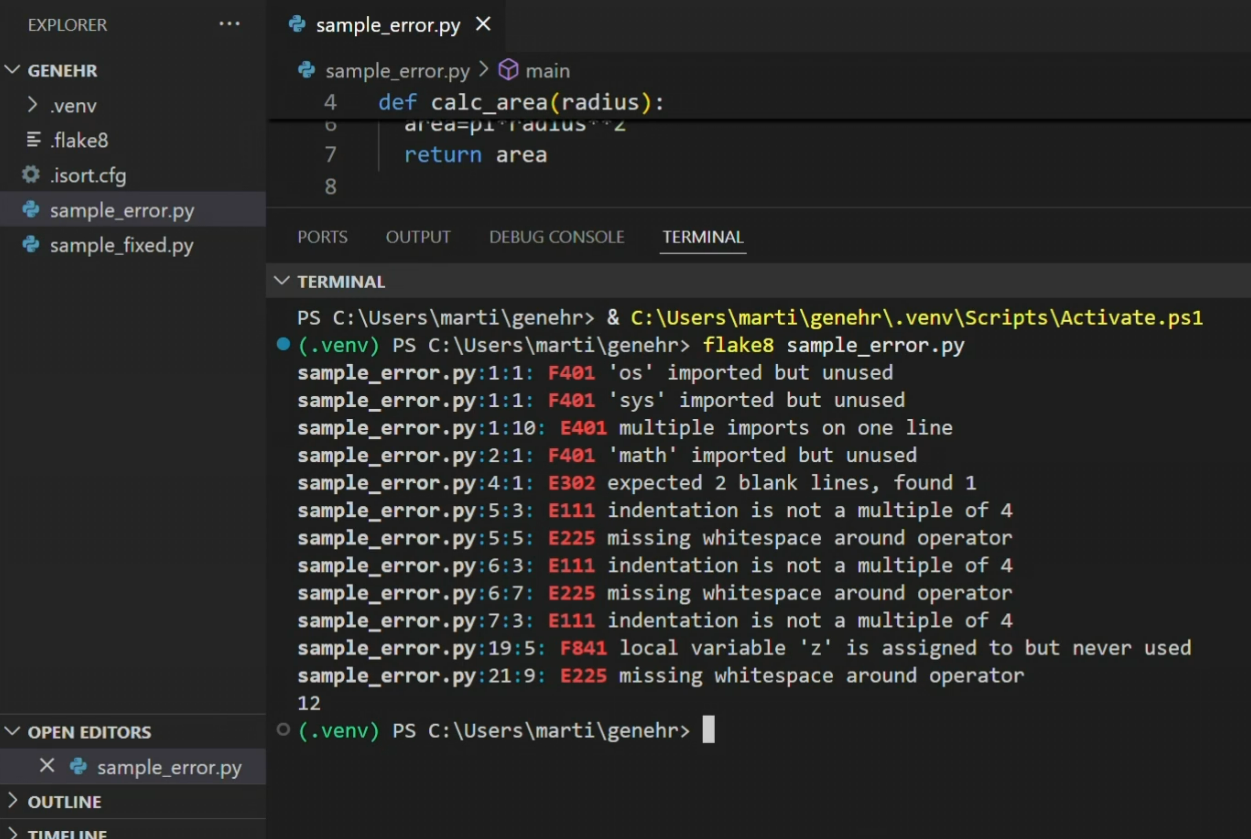

Hands-on with Flake8: What It Flags

When I ran Flake8 against this file, the feedback was immediate and very granular. It flagged unused imports, multiple imports on a single line, indentation errors, missing whitespace around operators, and unused local variables. Each issue was tied to a precise line and column, which makes it straightforward to clean up mechanical problems quickly.

In hands-on use, Flake8 works well as a lightweight hygiene check. It is fast, easy to run locally, and effective at enforcing basic formatting and style rules. Many teams use it as a pre-commit hook or a simple CI step to catch obvious issues before code reaches review.

Flake8 is limited by its scope. It evaluates files in isolation and has no awareness of pull request intent, cross-repository dependencies, or runtime risk. Most of its findings relate to style rather than production impact, which means it helps keep code consistent but cannot determine whether a change is safe, compliant, or ready to merge.
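A small, hypothetical file that triggers several of the findings listed above looks like this. The codes in the comments are the ones I would expect from a default Flake8 setup; confirm them against your installed version and plugin set.

```python
import os, sys  # E401 multiple imports on one line, F401 both unused


def total(a, b):
    scratch = a - b  # F841: local variable assigned but never used
    result=a + b  # E225: missing whitespace around operator
    return result
```

Note that the file runs and returns the right answer, which is exactly why these findings are hygiene signals rather than safety signals.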

Pros

- Detects common Python syntax and style violations

- Fast execution suitable for pre-commit hooks and CI checks

- Supports plugins to extend rule coverage

- Simple configuration and setup

Cons

- Limited to simple static checks and style rules

- No understanding of application behavior or context

- Operates at repository scope only

- Does not support pull request enforcement or governance logic

- Findings can overlap with other linting tools, increasing redundancy

Pricing

Free and open-source

4. Bandit

Bandit is a static analysis tool focused on identifying common security issues in Python code. It scans source files for patterns that may indicate insecure coding practices, such as the use of unsafe functions, weak cryptography, or improper handling of subprocesses.

In enterprise environments, Bandit is often integrated into CI pipelines as an early security check. It operates on individual repositories and does not incorporate runtime context, pull request intent, or cross-repository awareness.

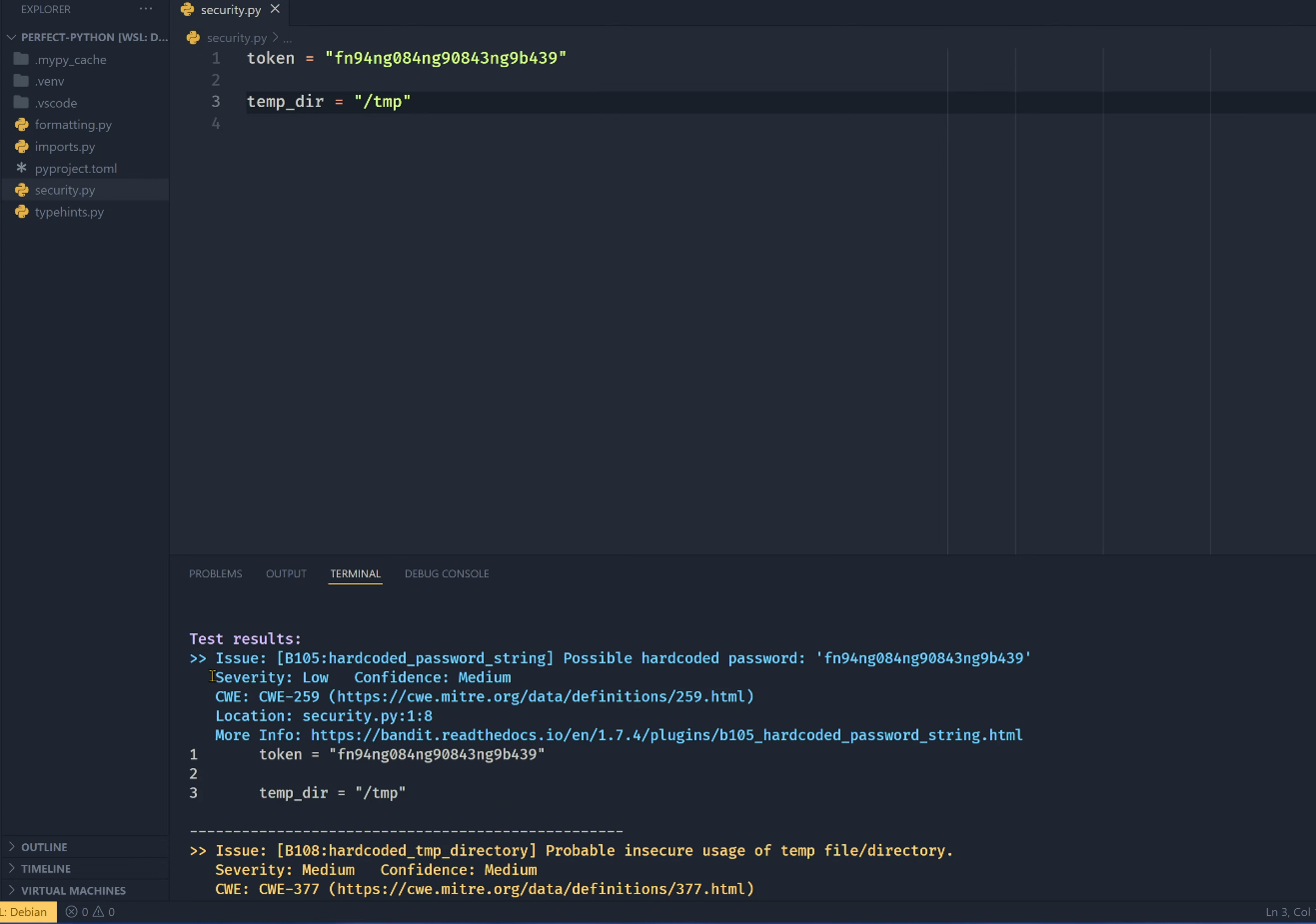

Hands-on with Bandit

In this hands-on run, I scanned a simple Python file using Bandit to check for security-related issues. The tool immediately flagged a hardcoded token and the use of a temporary directory path. Each finding was mapped to a specific CWE, with severity and confidence levels, along with a direct pointer to the exact line of code that triggered the issue.

From a practical standpoint, Bandit is effective at catching obvious insecure patterns early, especially things like hardcoded secrets, unsafe defaults, and risky standard library usage. It fits naturally as a CI step or a periodic security scan to keep known bad practices from quietly entering the codebase.

Where Bandit is limited for enterprise use is in context. It evaluates files in isolation and relies on rule-based pattern matching. It does not understand whether a value is actually reachable in production, how secrets flow across services, or whether a flagged issue violates an internal policy or contract. This makes it useful for baseline security hygiene, but insufficient on its own for enforcing production-ready security across large, multi-repo systems.
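The two findings from my run can be reproduced with a file along these lines. The names and values are made up for illustration. I would expect Bandit to report the token assignment under its hardcoded password string check (B105) and the path under its hardcoded temp directory check (B108), but treat those IDs as something to verify against your Bandit version.

```python
import os

API_TOKEN = "not-a-real-token-123"  # hardcoded secret in source
CACHE_DIR = "/tmp/app-cache"  # predictable, world-writable temp location


def cache_path(name):
    # Builds a file path under the hardcoded temp directory.
    return os.path.join(CACHE_DIR, name)
```

A single file can be scanned with `bandit example.py`, or a whole tree with `bandit -r` followed by the directory.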

Pros

- Simple to integrate into CI workflows

- Supports configuration to enable or disable specific checks

- Focused scope on security-related findings

Cons

- Rule-based detection with limited contextual understanding

- High potential for false positives without careful tuning

- No awareness of application architecture or data flow

- Operates only at file or repository level

- Does not provide enforcement or governance mechanisms

Pricing

Free and open-source

5. MyPy

MyPy is a static type checker for Python that analyzes code using type annotations to identify type-related issues before runtime. It is typically used to catch mismatches in function signatures, incorrect return types, and improper use of variables based on declared types.

In enterprise environments, MyPy is most effective in codebases that have adopted type annotations consistently. It operates at the repository level and does not evaluate runtime behavior, pull request intent, or cross-repository impact.

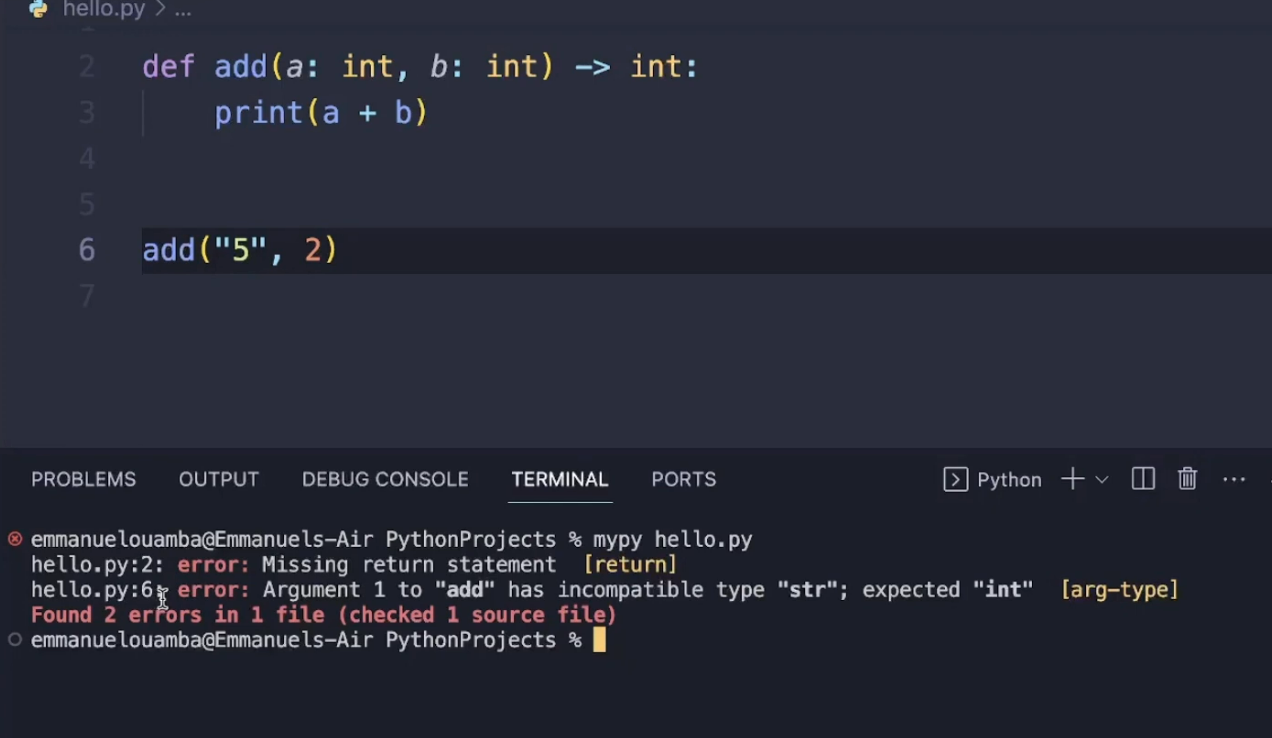

Hands-on with MyPy

In this hands-on example, I ran MyPy against a small Python file that uses type annotations on a simple function. The function is annotated to accept integers and return an integer, but the implementation prints the result instead of returning it, and the function is later called with a string argument.

MyPy immediately flags both problems: a missing return statement and a type mismatch where a string is passed instead of an integer. These are issues that Python itself will only surface at runtime, if at all.

It forces a stricter contract between function definitions and their usage, making type assumptions explicit. In larger codebases, this helps catch integration bugs early, especially when functions are reused across modules or when refactoring changes function signatures without updating all call sites.

However, MyPy’s usefulness depends heavily on discipline and coverage. It only reasons about annotated types and does not understand runtime behavior, business logic, or cross-repository impact.
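The file described above can be approximated as follows. The function name and values are my own stand-ins. The `try`/`except` in `demo` exists only so the snippet runs to completion; it shows that Python raises the type problem only when the call actually executes.

```python
def add(a: int, b: int) -> int:
    # Bug: prints the sum instead of returning it,
    # so the function implicitly returns None.
    print(a + b)


def demo() -> None:
    try:
        # A string is passed where an int is declared.
        add(1, "2")
    except TypeError as exc:
        print(f"runtime failure: {exc}")
```

Running `mypy` on this file should report a missing return statement in `add` and an incompatible argument type at the call site, without executing any of it.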

Pros

- Detects type-related errors that are difficult to identify through testing alone

- Encourages more explicit interfaces and contracts in Python code

- Integrates into CI pipelines and local development workflows

- Supports gradual typing, allowing incremental adoption

Cons

- Requires sustained effort to add and maintain type annotations

- Limited value in loosely typed or legacy Python codebases

- Does not assess logic correctness or security risk

- Operates at file or repository scope only

- No support for review enforcement or governance workflows

Pricing

Free and open-source

Why Enterprises Standardize on Qodo as Their AI Code Review Platform

Qodo is an AI Code Review Platform built for enterprise software teams that need to maintain code quality, security, and governance as development velocity increases. It is not a code generation tool and not an IDE assistant. Its role is to validate code before it reaches main branches by acting as a reasoning and enforcement layer inside pull request workflows.

Importantly, Qodo does not replace linters, static analyzers, or security scanners. Those tools remain foundational for enforcing syntax rules, style consistency, type correctness, and known vulnerability patterns. Qodo operates at a different layer, reasoning about behavioral risk, architectural consistency, and review governance inside pull requests.

Qodo sits between AI-assisted code creation and production delivery. As teams adopt AI coding tools, more logic, configuration, and integration code reaches pull requests faster than traditional review processes can handle.

Linters and static analysis tools still catch rule-based issues early in CI. Qodo complements them by analyzing changes in context during review, enforcing organization-wide standards, and scaling validation beyond what rule-based tools alone can reason about, without increasing reliance on senior engineers or manual AppSec review.

A Missing Quality Layer in the AI Development Stack

AI adoption increased coding velocity across teams: Enterprise teams have already adopted AI to increase coding velocity, but quality controls have not kept pace. Traditional static analysis tools help reduce low-level errors, but they do not reason about cross-repository impact, behavioral changes, or alignment with architectural intent. That is the layer enterprises find missing as AI speeds up development.

Qodo closes the validation gap between coding and production: Qodo fills this gap by acting as the quality and enforcement layer that sits between AI-assisted coding and production delivery. It ensures that faster code creation does not translate into faster risk accumulation.

Code Quality Enforced Across the Entire SDLC

Validation happens directly during pull request review: Qodo operates across the full software development lifecycle, not just at CI or deployment. It validates changes during pull request review and enforces standards consistently across repositories.

Planning intent stays connected to implementation decisions: Qodo connects quality decisions to planning artifacts such as Jira or Azure DevOps tickets, creating continuity between intent, implementation, and approval rather than isolated checks scattered across tools.

Shift-Left Validation That Happens Before Risk Compounds

Risk is detected earlier in the development workflow: Enterprises choose Qodo because it shifts meaningful validation earlier in the workflow. This works in combination with existing static checks, giving teams both automated rule enforcement and contextual review intelligence.

Issues are resolved while remediation cost is still low: Bugs and quality gaps are addressed while context is still fresh, reducing the cost and complexity of fixing problems later in the release cycle or after deployment.

Deep Codebase Context Across Repositories and Services

Quality issues rarely stay confined to one repository: In large organizations, quality issues often span shared libraries, services, and contracts. Qodo uses RAG (Retrieval Augmented Generation) to build context across the entire codebase by retrieving trusted information from these sources.

System-level awareness improves review decisions: By bringing cross-repository and dependency context into pull request review, Qodo allows reviewers to understand how a change affects the broader system, even across hundreds of repositories and multiple languages.

How I Use Qodo to Enforce Python Code Quality in Pull Requests

When I review Python code in an enterprise setup, I almost never look at a single repository in isolation. Real risk shows up at service boundaries: how one service calls another, whether contracts drift silently, and whether shared logic is being duplicated instead of reused. This is exactly where most traditional Python quality tools stop being useful, and where Qodo starts to matter.

In this pull request, I explicitly asked Qodo to check cross-repo code quality against the payments-service. Instead of responding with generic style feedback, Qodo reasoned across repositories and surfaced issues that would otherwise slip through review.

Integration boundaries became the starting point for analysis: The first thing Qodo did was anchor its analysis at the integration boundary. It pointed directly to the orderProcessor.js logic that calls POST /api/payments/charge and evaluated that call against the actual cross-repo contract definitions. This immediately exposed missing authorization headers and hardcoded local endpoints.

Future breaking changes were identified early: What made this valuable was not just identifying the issues, but explaining why they matter long term. Even though the payments service does not currently enforce authorization, Qodo flagged this as a future breaking change and a quality risk baked into the code today.

From there, Qodo shifted into operational reliability, which is where most Python reviews fall apart under time pressure. It flagged the absence of timeouts, retries, backoff, and idempotency keys on outbound HTTP calls.
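For readers unfamiliar with these reliability controls, here is a minimal sketch of what they look like together. It is not Qodo output. `TransientError`, `call_with_retries`, and the `Idempotency-Key` header name are assumptions for illustration, and `send` is any callable that performs the HTTP request.

```python
import time
import uuid


class TransientError(Exception):
    """Hypothetical marker for failures that are safe to retry."""


def call_with_retries(send, payload, attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call `send(payload, headers=..., timeout=...)` with exponential backoff."""
    # One idempotency key is reused for all attempts so the server
    # can recognize a retry and avoid charging twice.
    headers = {"Idempotency-Key": str(uuid.uuid4())}
    for attempt in range(attempts):
        try:
            # An explicit timeout keeps a slow dependency from hanging the caller.
            return send(payload, headers=headers, timeout=5.0)
        except TransientError:
            if attempt == attempts - 1:
                raise
            # Wait 0.5s, 1s, 2s, ... between attempts.
            sleep(base_delay * 2 ** attempt)
```

In a real service, `send` would wrap the HTTP client call and translate timeouts or 5xx responses into `TransientError`. Whether the downstream service honors an idempotency header is something its contract has to state.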

What I really liked about the tool is how it handled duplicated logic across repositories. It identified validation and formatting utilities that already exist in shared-utils but were re-implemented locally.

Dependency drift across repositories became visible: Qodo even detected a version mismatch where one repo was still calling an old function signature while the shared library had already moved on. This is the kind of drift that quietly accumulates technical debt and breaks consistency across services. In a system with 50 or 100 repositories, no human reviewer catches this reliably. Qodo did it automatically.

Throughout the review, the feedback stayed structured and scoped. I was not buried in inline comments. Instead, Qodo grouped findings under contract violations, reliability risks, and duplication issues.

As a reviewer, I could immediately see what would block a safe merge and what needed architectural follow-up. That alone saves significant senior engineering time.

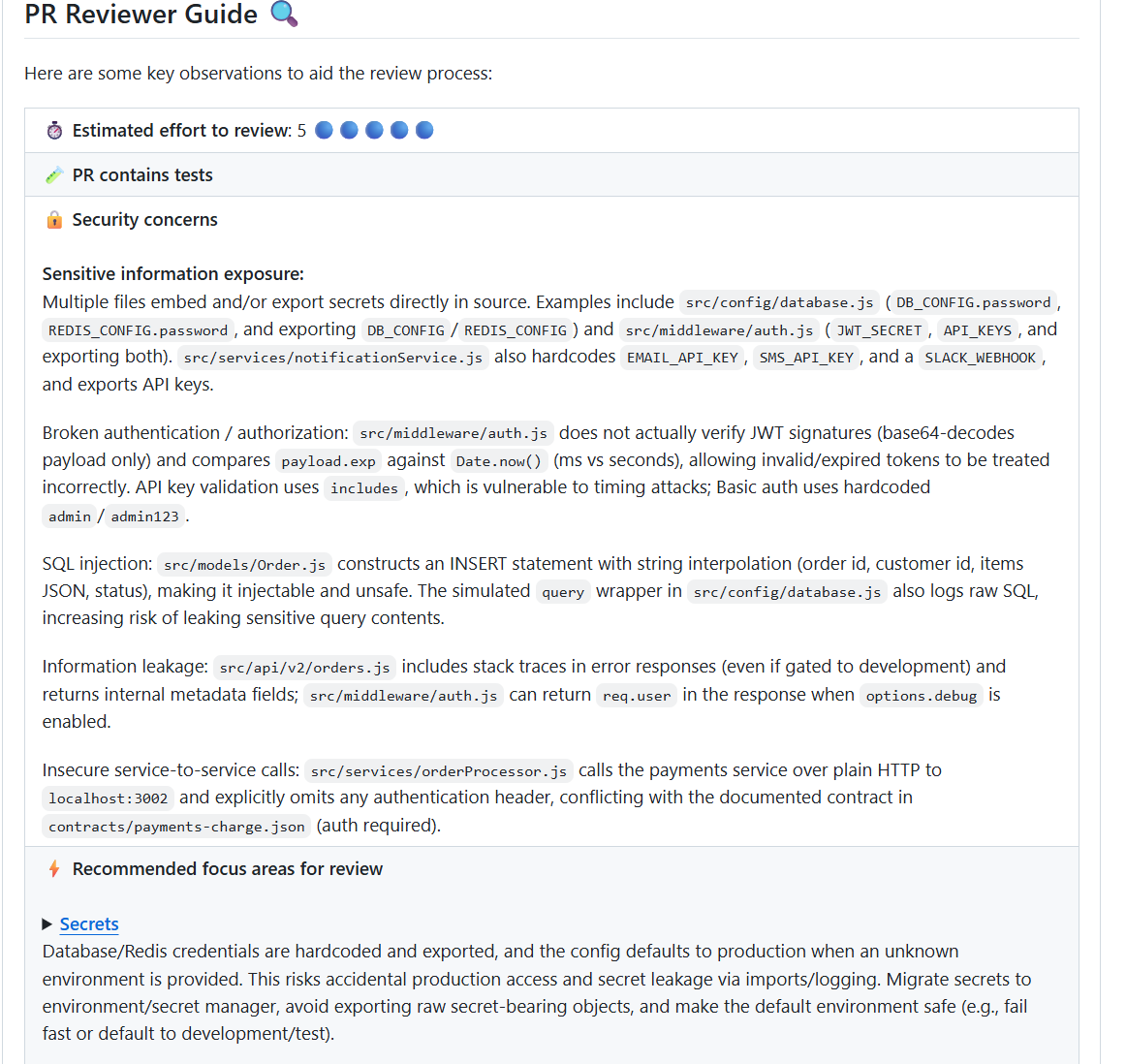

At this stage, I asked Qodo a compliance-specific question directly in the pull request:

/review Is this change compliant with our SOC 2 or internal service-to-service auth requirements?

Qodo responded by analyzing the change against concrete controls already present in the codebase and service contracts. It flagged multiple issues that would fail a real SOC 2 review, not just theoretical best practices.

Secret exposure risks were identified in configuration and middleware: Qodo detected hardcoded and exported secrets across configuration and middleware, including database credentials, API keys, JWT secrets, and webhooks. The risk explanation was clear: exporting secret-bearing objects and unsafe defaults increases the likelihood of accidental production access and credential leakage.
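A common remediation for this class of finding is to read secrets from the environment and fail fast when one is missing, rather than shipping a default. The helper below is a hypothetical sketch of that idea, not code from the reviewed repository.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised at startup when a required secret is not configured."""


def require_secret(name, environ=os.environ):
    """Read a secret from the environment and fail fast if it is absent."""
    value = environ.get(name)
    if not value:
        # No fallback value: an unsafe default is worse than a crash at startup.
        raise MissingSecretError(f"required secret {name} is not set")
    return value
```

Teams with a secrets manager would swap the environment lookup for that client, but the fail-fast behavior stays the same.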

Authentication controls were shown to be ineffective in reality: Qodo surfaced broken authentication logic where JWT signatures were not actually verified, expiration checks were incorrect, and insecure comparison methods were used. From a compliance standpoint, this means authentication controls exist on paper but not in effect.
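To show what "actually verified" means here, the sketch below checks an HS256 token using only the standard library: it recomputes the signature, compares it in constant time, and rejects expired tokens. The function names are mine, and this is an illustration of the three checks, not a replacement for a maintained JWT library such as PyJWT.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_decode(segment):
    # JWT segments omit base64 padding, so restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def _b64url_encode(raw):
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def sign_hs256(claims, secret):
    """Build a token; included so the example can be exercised end to end."""
    header_b64 = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload_b64 = _b64url_encode(json.dumps(claims).encode())
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    signature = hmac.new(secret, signing_input, hashlib.sha256).digest()
    return f"{header_b64}.{payload_b64}.{_b64url_encode(signature)}"


def verify_hs256(token, secret, now=None):
    """Return the claims of a valid HS256 token, or raise ValueError."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(signature_b64)
    except (ValueError, TypeError) as exc:
        raise ValueError("malformed token") from exc
    if not isinstance(header, dict) or not isinstance(claims, dict):
        raise ValueError("malformed token")
    # Pin the algorithm instead of trusting whatever the header claims.
    if header.get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(signature, expected):
        raise ValueError("invalid signature")
    now = time.time() if now is None else now
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or now >= exp:
        raise ValueError("token expired or missing exp")
    return claims
```

Here `secret` is a bytes value. Decoding the payload without the signature and expiry checks is the "exists on paper" failure mode described above.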

On the service-to-service side, it flagged unauthenticated HTTP calls to the payments service that violate documented contracts requiring authorization. This directly conflicts with internal service-to-service auth requirements.

Enforcing Python Code Quality Is About Governance Beyond Tooling

Enterprise Python code quality is no longer constrained by tooling gaps but by the inability of review and governance processes to scale with development velocity. As AI-generated code speeds up change, traditional linters and static analysis tools remain necessary but insufficient on their own. They catch issues early, yet they do not enforce standards consistently or reason about risk in context.

This is why many enterprises introduce Qodo as the AI Code Review Platform that sits between code creation and production. By enforcing quality, security, and compliance directly inside pull request workflows, Qodo turns review into a system rather than a manual safeguard. The result is Python code that is not only correct but consistently production-ready at scale.

FAQ

1. What makes Python code quality harder at enterprise scale?

Enterprise Python systems span many repositories, teams, and shared libraries. Review depth varies, context is fragmented, and governance depends too heavily on manual enforcement. As AI-assisted coding increases volume, these gaps show up most clearly during pull request review.

2. Are linters and static analysis tools still useful for Python teams?

Yes. Tools like Pylint, Flake8, Bandit, and MyPy remain important for catching specific classes of issues early. Their limitation is that they operate in isolation and do not enforce quality or compliance consistently at merge time across large codebases.

3. How does Qodo differ from traditional Python code quality tools?

Qodo is an AI Code Review Platform, not a linter or scanner. It checks Python changes in context, enforces standards inside pull request workflows, validates scope against tickets, and blocks unsafe merges when requirements are not met.

4. Can Qodo work alongside existing Python quality tools?

Yes. Qodo does not replace linters or security scanners. It orchestrates their signals and adds reasoning, context, and enforcement at review time, allowing enterprises to scale quality without increasing manual review effort.

5. Why do regulated enterprises adopt Qodo for Python code review?

Because Qodo enforces quality and compliance directly in the development workflow. It supports consistent standards across repositories, creates auditable review artifacts, and cuts reliance on individual reviewer judgment, which is critical for SOC 2, ISO, PCI, and HIPAA environments.

6. How do large Python teams decide whether a pull request is safe to merge?

They move away from relying on individual reviewer intuition and instead enforce rules inside the pull request. A change is considered safe when it meets internal standards, respects service contracts, aligns with the linked ticket, and does not introduce new behavioral or security risk, even if tests pass.

7. What signals actually matter when reviewing Python code at scale?

The highest-impact signals are behavioral: silent exception handling, missing validation, unsafe defaults, contract violations, duplicated logic, and tests that only cover happy paths. Formatting and style issues rarely correlate with production incidents.