AI Coding Needs a Centralized Context Plane and Verification

At AI Engineer Miami 2026, I gave a talk about code quality gates for AI-assisted development. Afterward, I spoke with engineers from the largest enterprises, and they pointed to the same failure mode in agentic engineering: their teams lacked a reliable way to ensure the same engineering standards reached every agent, every repo, and every step in the workflow.

The Centralized Context Plane

AGENTS.md, CLAUDE.md, internal docs, review checklists, PR comments, and tribal knowledge are often treated as different categories of information. But they all fall under the same umbrella: they are types of context.

The issue is that when context is scattered across laptops, wikis, old pull requests, and people’s heads, the codebase starts to drift. One engineer’s agent follows one set of rules. Another engineer’s agent follows a different prompt. A third team catches problems only in review. In this common scenario, context has no operating model, and that shows up directly in code quality when teams ship software with AI.

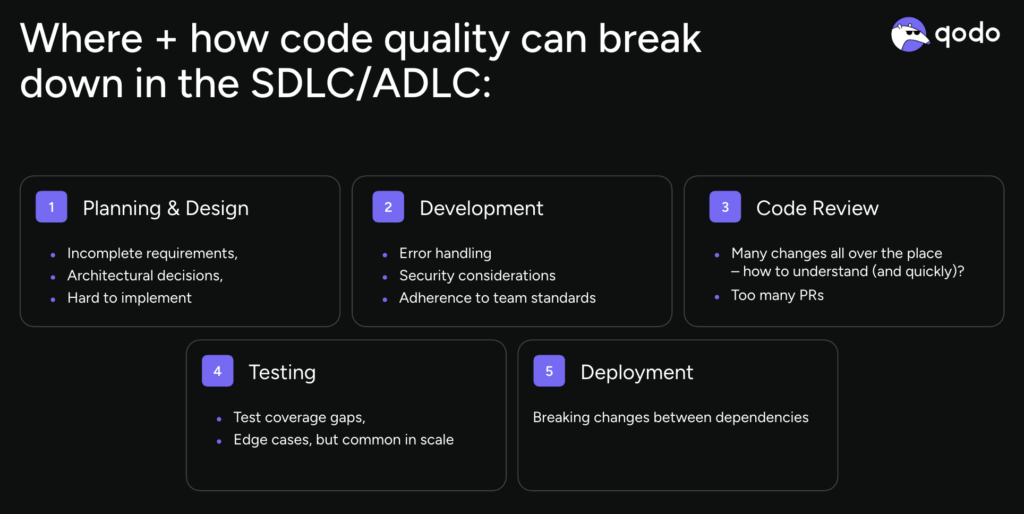

That problem can also appear early in the development lifecycle. Code quality can break down in planning and design, in development, in review, in testing, and in deployment. By the time a pull request is open, some of the most expensive mistakes have already been made.

This is why I keep coming back to the idea of a centralized context plane. By that, I mean one operational layer where engineering standards, repo rules, review criteria, architectural constraints, domain requirements, and team-specific patterns live in a form that tools can use. If a rule matters, it should be available to the agent that plans the work, the CLI that runs local review, and the system that evaluates the pull request.
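To make "a form that tools can use" concrete, here is a minimal Python sketch, not a description of any particular product. The `Rule` and `ContextPlane` names, the rule IDs, and the repo and path patterns are all hypothetical. It assumes each standard is stored once, with a scope, and that every consumer (planning agent, local CLI, PR check) asks the same store the same question.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    """One engineering standard, stored once with the scope it applies to."""
    rule_id: str
    text: str
    repos: tuple[str, ...] = ("*",)  # glob patterns for repo names
    paths: tuple[str, ...] = ("*",)  # glob patterns for file paths


@dataclass
class ContextPlane:
    """Hypothetical central store that every tool queries the same way."""
    rules: list[Rule] = field(default_factory=list)

    def rules_for(self, repo: str, path: str) -> list[Rule]:
        # A rule applies when both its repo scope and its path scope match.
        return [
            r for r in self.rules
            if any(fnmatchcase(repo, p) for p in r.repos)
            and any(fnmatchcase(path, p) for p in r.paths)
        ]


# Illustrative rules only; real ones would come from your own standards.
plane = ContextPlane([
    Rule("auth-001", "Token checks go through the shared auth middleware.",
         paths=("src/auth/*",)),
    Rule("api-001", "Public API changes need a versioned contract update.",
         repos=("payments-*",), paths=("api/*",)),
    Rule("gen-001", "New modules need unit tests."),
])

for rule in plane.rules_for("web-app", "src/auth/login.py"):
    print(rule.rule_id, "-", rule.text)
```

The design choice that matters here is the single lookup: the planner, the CLI, and the PR check would all call `rules_for` instead of each keeping its own copy of the rules.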

“Written somewhere” is not the same as operationalized everywhere. AI makes this worse by increasing code volume before most teams have improved the reliability of their context.

The Verification Layer

The next missing piece is the verification layer. A context plane without verification becomes another library of good intentions, which is why a verification layer is critical for AI coding. And it has to start where a developer’s work begins: in the process and the interfaces in which we already work.

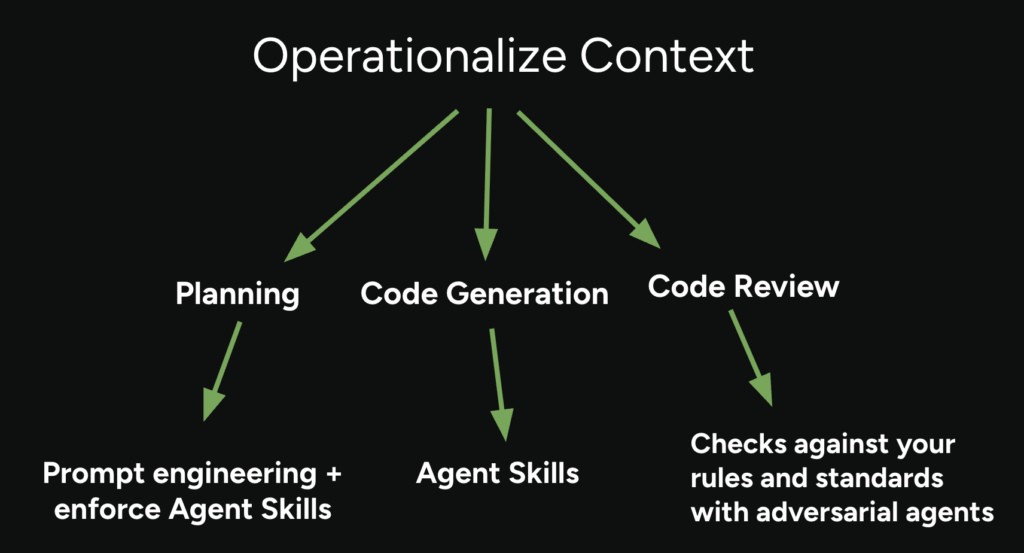

A verification layer is what applies the same context during planning, code generation, local review, pre-PR validation, and PR review. It is the system that says: these are the standards, and here is where we check them before bad patterns harden into the codebase.
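As a rough illustration of "the same context at every stage," the sketch below runs one shared list of checks no matter which stage calls it. Everything in it is an assumption made for the example: the `Stage` names mirror the stages listed above, and the two substring checks are deliberately simplistic stand-ins for real standards.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Stage(Enum):
    PLANNING = "planning"
    GENERATION = "generation"
    LOCAL_REVIEW = "local_review"
    PRE_PR = "pre_pr"
    PR_REVIEW = "pr_review"


@dataclass(frozen=True)
class Check:
    rule_id: str
    description: str
    violates: Callable[[str], bool]  # returns True when the text breaks the rule


# Toy checks for illustration; real ones would be derived from shared standards.
CHECKS = [
    Check("no-print-debug", "No leftover print() debugging.", lambda t: "print(" in t),
    Check("no-todo", "No unresolved TODO markers.", lambda t: "TODO" in t),
]


def verify(stage: Stage, text: str, checks: list[Check] = CHECKS) -> list[str]:
    """Apply the same checks at any stage; the stage only labels the finding."""
    return [
        f"[{stage.value}] {c.rule_id}: {c.description}"
        for c in checks
        if c.violates(text)
    ]


diff = "+ print(user.token)  # TODO remove"
for stage in (Stage.LOCAL_REVIEW, Stage.PR_REVIEW):
    for finding in verify(stage, diff):
        print(finding)
```

Because `verify` takes the stage as a label rather than as a switch, a finding raised in PR review is by construction one that local review would also have raised.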

The clearest way I know to explain it is the same way I framed it in my talk:

- Define what code quality means to you.

- Decide where those quality standards live.

- Design the verification layer where engineers already work.

A simple example: teams often keep AGENTS.md, repo rules, and review criteria in separate lanes. One file guides the coding agent. Another system holds review rules. Human reviewers fill the gaps from memory. That setup guarantees drift.

The better approach is to treat all three as a single context system and use it early.

- If a change touches authentication, background jobs, or API contracts, the agent should pull the relevant standards before it writes code.

- Local review should check the diff against those same rules before opening a PR.

- CI should apply the same expectations again, so human review starts at the architectural and product level instead of spending cycles on issues that should have been caught earlier.
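The first two steps above can be sketched as a small lookup driven by the diff itself. This is a minimal example under stated assumptions: the area names come from the bullets, but the path prefixes and the standards text are invented for illustration, and the parsing only handles git-style unified diffs with `+++ b/<path>` headers.

```python
import re

# Hypothetical mapping: area -> (path prefixes, standards). All illustrative.
AREAS = {
    "authentication": (("src/auth/",), ["Route token checks through the shared middleware."]),
    "background jobs": (("src/jobs/", "workers/"), ["Jobs must be idempotent and safe to retry."]),
    "API contracts": (("api/", "openapi/"), ["Breaking changes need a new contract version."]),
}


def touched_paths(diff: str) -> list[str]:
    """Extract new-side file paths from a git-style unified diff."""
    return re.findall(r"^\+\+\+ b/(.+)$", diff, flags=re.MULTILINE)


def standards_for(diff: str) -> dict[str, list[str]]:
    """Return the standards for every sensitive area the diff touches."""
    paths = touched_paths(diff)
    return {
        area: standards
        for area, (prefixes, standards) in AREAS.items()
        if any(path.startswith(prefixes) for path in paths)
    }


DIFF = """\
diff --git a/src/auth/session.py b/src/auth/session.py
--- a/src/auth/session.py
+++ b/src/auth/session.py
@@ -1,2 +1,3 @@
+import jwt
diff --git a/docs/readme.md b/docs/readme.md
--- a/docs/readme.md
+++ b/docs/readme.md
@@ -1 +1 @@
+hello
"""

for area, standards in standards_for(DIFF).items():
    print(area, "->", standards)
```

An agent could call something like `standards_for` before writing code, and a local review command could call it again on the finished diff, so both see the same standards for the same change.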

That is also why I think context engineering needs a broader definition than the one it currently gets. It is the work of codifying standards, centralizing them, scoping them to the right repos, and making them executable in the tools engineers already use. A narrower definition does not scale across distributed teams. When senior engineers tell me their teams are getting inconsistent results from different agents, I hear a context distribution problem, one that the stochastic behavior of LLMs and agents amplifies.

If I were giving developer teams three practical takeaways from my time at AI Engineer Miami, they would be:

- Treat every standard as context, even if it currently lives in a PR comment or somebody’s head.

- Put that context in one operational plane instead of scattering it across local files and folklore.

- Then verify against it before human review, not after the code has already taken a full trip through the workflow.

That is the part of AI-assisted development that I think deserves more attention this year. If AI increases code volume, teams need a system that makes standards executable. Otherwise, the failure mode is predictable: faster output, less consistent judgment, and more cleanup pushed downstream.

This is the direction I care about at Qodo. We help turn coding rules into something agents, local workflows, and review systems can all act on. That is how context engineering is operationalized and how verification is enforced to ship higher-quality software.